Kubernetes: Nodes flapping between Ready/NotReady with PLEG issues

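Thanks for filing an issue! Before hitting the button, please answer these questions.

Is this a request for help? No

What keywords did you search in Kubernetes issues before filing this one? (If you have found any duplicates, you should instead reply there.): PLEG NotReady kubelet

Is this a BUG REPORT or FEATURE REQUEST? Bug report

If this is a BUG REPORT, please: fill in as much of the template below as you can. If you leave out information, we can't help you as well. If this is a FEATURE REQUEST, please: describe *in detail* the feature/behavior/change you'd like to see. In both cases, be ready for follow-up questions, and please respond in a timely manner. If we can't reproduce a bug or think a feature already exists, we might close your issue. If we're wrong, please feel free to reopen it and explain why.

Kubernetes version (use kubectl version): 1.6.2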

Environment:

- Cloud provider or hardware configuration: CoreOS on AWS

- OS (e.g. from /etc/os-release): CoreOS 1353.7.0

- Kernel (e.g. uname -a): 4.9.24-coreos

- Install tools:

- Others:

What happened:

I have a cluster of 3 workers. Two, and sometimes all three, nodes keep dropping into NotReady, with the following messages in journalctl -u kubelet:

May 05 13:59:56 ip-10-50-20-208.ec2.internal kubelet[2858]: I0505 13:59:56.872880 2858 kubelet_node_status.go:379] Recording NodeNotReady event message for node ip-10-50-20-208.ec2.internal

May 05 13:59:56 ip-10-50-20-208.ec2.internal kubelet[2858]: I0505 13:59:56.872908 2858 kubelet_node_status.go:682] Node became not ready: {Type:Ready Status:False LastHeartbeatTime:2017-05-05 13:59:56.872865742 +0000 UTC LastTransitionTime:2017-05-05 13:59:56.872865742 +0000 UTC Reason:KubeletNotReady Message:PLEG is not healthy: pleg was last seen active 3m7.629592089s ago; threshold is 3m0s}

May 05 14:07:57 ip-10-50-20-208.ec2.internal kubelet[2858]: I0505 14:07:57.598132 2858 kubelet_node_status.go:379] Recording NodeNotReady event message for node ip-10-50-20-208.ec2.internal

May 05 14:07:57 ip-10-50-20-208.ec2.internal kubelet[2858]: I0505 14:07:57.598162 2858 kubelet_node_status.go:682] Node became not ready: {Type:Ready Status:False LastHeartbeatTime:2017-05-05 14:07:57.598117026 +0000 UTC LastTransitionTime:2017-05-05 14:07:57.598117026 +0000 UTC Reason:KubeletNotReady Message:PLEG is not healthy: pleg was last seen active 3m7.346983738s ago; threshold is 3m0s}

May 05 14:17:58 ip-10-50-20-208.ec2.internal kubelet[2858]: I0505 14:17:58.536101 2858 kubelet_node_status.go:379] Recording NodeNotReady event message for node ip-10-50-20-208.ec2.internal

May 05 14:17:58 ip-10-50-20-208.ec2.internal kubelet[2858]: I0505 14:17:58.536134 2858 kubelet_node_status.go:682] Node became not ready: {Type:Ready Status:False LastHeartbeatTime:2017-05-05 14:17:58.536086605 +0000 UTC LastTransitionTime:2017-05-05 14:17:58.536086605 +0000 UTC Reason:KubeletNotReady Message:PLEG is not healthy: pleg was last seen active 3m7.275467289s ago; threshold is 3m0s}

May 05 14:29:59 ip-10-50-20-208.ec2.internal kubelet[2858]: I0505 14:29:59.648922 2858 kubelet_node_status.go:379] Recording NodeNotReady event message for node ip-10-50-20-208.ec2.internal

May 05 14:29:59 ip-10-50-20-208.ec2.internal kubelet[2858]: I0505 14:29:59.648952 2858 kubelet_node_status.go:682] Node became not ready: {Type:Ready Status:False LastHeartbeatTime:2017-05-05 14:29:59.648910669 +0000 UTC LastTransitionTime:2017-05-05 14:29:59.648910669 +0000 UTC Reason:KubeletNotReady Message:PLEG is not healthy: pleg was last seen active 3m7.377520804s ago; threshold is 3m0s}

May 05 14:44:00 ip-10-50-20-208.ec2.internal kubelet[2858]: I0505 14:44:00.938266 2858 kubelet_node_status.go:379] Recording NodeNotReady event message for node ip-10-50-20-208.ec2.internal

May 05 14:44:00 ip-10-50-20-208.ec2.internal kubelet[2858]: I0505 14:44:00.938297 2858 kubelet_node_status.go:682] Node became not ready: {Type:Ready Status:False LastHeartbeatTime:2017-05-05 14:44:00.938251338 +0000 UTC LastTransitionTime:2017-05-05 14:44:00.938251338 +0000 UTC Reason:KubeletNotReady Message:PLEG is not healthy: pleg was last seen active 3m7.654775919s ago; threshold is 3m0s}
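Each of the "Node became not ready" lines above carries two Go-style durations: how long ago PLEG was last seen active, and the threshold. The following is a minimal sketch, not part of the original report, of how one might pull those values out of a kubelet log line to see by how much the threshold was exceeded. The helper names (`parse_go_duration`, `pleg_overrun`) are hypothetical, and the parser assumes only the `h`, `m` and `s` units that appear in this thread.

```python
import re

# Seconds per unit for the duration components seen in these logs.
UNIT_SECONDS = {"h": 3600.0, "m": 60.0, "s": 1.0}
PART_RE = re.compile(r"(\d+(?:\.\d+)?)([hms])")
# The wording of the kubelet message, as quoted in the logs above.
MSG_RE = re.compile(
    r"pleg was last seen active ([0-9hms.]+) ago; threshold is ([0-9hms.]+)"
)


def parse_go_duration(text):
    """Convert a duration such as '3m7.629592089s' to seconds (h/m/s units only)."""
    parts = PART_RE.findall(text)
    # Reject anything that is not made up entirely of <number><unit> parts.
    if not parts or "".join(num + unit for num, unit in parts) != text:
        raise ValueError("unsupported duration: %r" % text)
    return sum(float(num) * UNIT_SECONDS[unit] for num, unit in parts)


def pleg_overrun(line):
    """Return seconds by which PLEG exceeded its threshold, or None if no match."""
    match = MSG_RE.search(line)
    if match is None:
        return None
    age, threshold = (parse_go_duration(g) for g in match.groups())
    return age - threshold
```

Fed the output of journalctl -u kubelet line by line, this would show that in the report above every failure overshoots the 3m0s threshold by only about seven seconds.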

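The docker daemon is fine (local docker ps, docker images, etc. all work and respond immediately).

Using weave networking, installed via kubectl apply -f https://git.io/weave-kube-1.6

What you expected to happen:

Nodes to be ready.

How to reproduce it (as minimally and precisely as possible):

Wish I knew how!

Anything else we need to know:

All of the nodes (workers and masters) are on the same private subnet, with a NAT gateway to the Internet. Workers are in a security group that allows unlimited access (all ports) from the masters' security group; masters allow all ports from the same subnet. The proxy runs on the workers; apiserver, controller-manager and scheduler run on the masters.

kubectl logs and kubectl exec always hang, even when run from the master itself (or from outside).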


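@deitch, how many containers were running on the node? What's the overall CPU utilization of your nodes?

Basically none. kube-dns, weave-net, weave-npc, and 3 template sample services. Actually only one, because two had no image and were going to be cleaned up. AWS m4.2xlarge. Not a resource issue.

I ended up having to destroy the nodes and recreate them. No PLEG messages since the destroy/recreate, and they seem 50% OK. They stay Ready, although they still refuse to allow kubectl exec or kubectl logs.

I really struggled to find any documentation on what PLEG actually is, and more importantly on how to check its own logs and state and debug it.

Hmm... to add to the mystery, no container can resolve any hostnames, and kubedns gives: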

E0505 17:30:49.412272 1 reflector.go:199] pkg/dns/config/sync.go:114: Failed to list *api.ConfigMap: Get https://10.200.0.1:443/api/v1/namespaces/kube-system/configmaps?fieldSelector=metadata.name%3Dkube-dns&resourceVersion=0: dial tcp 10.200.0.1:443: getsockopt: no route to host

E0505 17:30:49.412285 1 reflector.go:199] pkg/dns/dns.go:148: Failed to list *api.Service: Get https://10.200.0.1:443/api/v1/services?resourceVersion=0: dial tcp 10.200.0.1:443: getsockopt: no route to host

E0505 17:30:49.412272 1 reflector.go:199] pkg/dns/dns.go:145: Failed to list *api.Endpoints: Get https://10.200.0.1:443/api/v1/endpoints?resourceVersion=0: dial tcp 10.200.0.1:443: getsockopt: no route to host

I0505 17:30:51.855370 1 logs.go:41] skydns: failure to forward request "read udp 10.100.0.3:60364->10.50.0.2:53: i/o timeout"

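FWIW, 10.200.0.1 is the internal kube API service, 10.200.0.5 is DNS, and 10.50.20.0/24 and 10.50.21.0/24 are the subnets (2 separate AZs) on which the masters and workers run.

Is something just really fubar in the networking?

> Is something just really fubar in the networking?

@bboreham this is weave, using the "standard" weave with IPALLOC_RANGE=10.100.0.0/16 added, as discussed at https://github.com/weaveworks/weave/issues/2736

@deitch PLEG is how the kubelet periodically lists the pods on the node to check their health and update its cache. If you see PLEG timeout logs, it may not be related to DNS; more likely the kubelet's calls to docker are timing out.

Thanks @qiujian16. The issue appears to have gone away, but I have no idea how to check it. Docker itself appeared healthy. I wondered whether it could be the networking plugin, but that should not affect the kubelet itself.

Can you give me some pointers here on checking PLEG health and status? Then we can close this out until the issue recurs.

@deitch PLEG stands for "pod lifecycle event generator". It is an internal component of the kubelet, and I do not think you can check its status directly. (https://github.com/kubernetes/community/blob/master/contributors/design-proposals/pod-lifecycle-event-generator.md)

Is it an internal module in the kubelet binary? Is it another standalone container (docker, runc, containerd)? Is it a standalone binary?

Basically, if the kubelet reports PLEG errors, it would be very helpful to be able to find out what those errors are, check its status, and try to replicate them.

It is an internal module.

@deitch most likely docker was not very responsive at times, causing PLEG to miss its threshold.

I am having a similar issue on all nodes but one of a cluster I just created.

Logs: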

kube-worker03.foo.bar.com kubelet[3213]: E0511 19:00:59.139374 3213 remote_runtime.go:109] StopPodSandbox "12c6a5c6833a190f531797ee26abe06297678820385b402371e196c69b67a136" from runtime service failed: rpc error: code = 4 desc = context deadline exceeded

May 11 19:00:59 kube-worker03.foo.bar.com kubelet[3213]: E0511 19:00:59.139401 3213 kuberuntime_gc.go:138] Failed to stop sandbox "12c6a5c6833a190f531797ee26abe06297678820385b402371e196c69b67a136" before removing: rpc error: code = 4 desc = context deadline exceeded

May 11 19:01:04 kube-worker03.foo.bar.com kubelet[3213]: E0511 19:01:04.627954 3213 pod_workers.go:182] Error syncing pod 1c43d9b6-3672-11e7-a6da-00163e041106 ("kube-dns-4240821577-1wswn_kube-system(1c43d9b6-3672-11e7-a6da-00163e041106)"), skipping: rpc error: code = 4 desc = context deadline exceeded

May 11 19:01:18 kube-worker03.foo.bar.com kubelet[3213]: E0511 19:01:18.627819 3213 pod_workers.go:182] Error syncing pod 1c43d9b6-3672-11e7-a6da-00163e041106 ("kube-dns-4240821577-1wswn_kube-system(1c43d9b6-3672-11e7-a6da-00163e041106)"), skipping: rpc error: code = 4 desc = context deadline exceeded

May 11 19:01:21 kube-worker03.foo.bar.com kubelet[3213]: I0511 19:01:21.627670 3213 kubelet.go:1752] skipping pod synchronization - [PLEG is not healthy: pleg was last seen active 3m0.339074625s ago; threshold is 3m0s]

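Downgrading docker and restarting virtually everything did not help. The nodes are all managed via puppet, so I expect them to be completely identical, and I have no clue what is wrong. Docker logs in debug mode show that it is receiving these requests.

@bjhaid what are you using for networking? I was seeing some interesting networking issues at the time.

@deitch weave, but I don't think this is a networking-related problem, since it looks like a communication problem between kubelet and docker. I can confirm via docker's debug logging that docker is receiving these requests from kubelet.

My PLEG issues appear to be gone, although I won't feel confident until the next time I set up these clusters afresh (all via terraform modules I built).

Weave issues appear to exist, or possibly k8s/docker issues.

@deitch did you do anything to make the PLEG issues go away, or did magic happen?

It was actually hostname resolution: the controllers could not resolve the hostnames of the newly created nodes. Sorry for the noise.

I was too quick to report that things were fine; the problem still exists. I'll keep looking and report back if I find anything.

I guess this issue is related to weave-kube. I had the same issue, and this time, in order to solve it without recreating the cluster, I had to remove weave and re-apply it (with a reboot of the node to propagate the removal order)... And it's back.

So I have no clue why or how, but I'm pretty sure it is due to weave-kube-1.6.

Forgot to return here. My problem was that the weave interface was not coming up, so the containers had no networking. This, however, was because our firewall was blocking the weave data and vxlan ports; once I opened these ports, things were fine.

There were two sets of issues I had, possibly related.

- PLEG. I believe these have gone away, but I have not recreated enough clusters to be completely confident. I do not believe I changed anything _directly_ to make that happen.

- Weave issues wherein containers were unable to connect to anything.

Suspiciously, all of the PLEG issues happened at exactly the same time as the weave network issues.

Bryan @ weaveworks pointed me to the coreos issues. CoreOS has a rather aggressive tendency to try to manage bridges, veths, basically everything. Once I stopped CoreOS from doing that for everything except lo and the actual physical interfaces on the host, all of my problems went away.

Are people still having problems running coreos?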

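We've been plagued by these issues for the last month or so (I want to say since upgrading the clusters from 1.5.x to 1.6.x), and it's just as mysterious.

We're running weave on debian jessie AMIs in aws, and every once in a while a cluster decides that PLEG is not healthy.

Weave seems OK in this case, because pods come up fine up to a point.

One thing we noted is that if we scale ALL our replicas down, the issue seems to go away, but as we start scaling deployments and statefulsets back up, it reappears around a certain number of containers (at least this time).

docker ps and docker info seem fine on the node.

Resource utilization is nominal: 5% cpu util, 1.5/8 GB of RAM used (according to root htop), and total node resource provisioning sits around 30% with everything that is supposed to be scheduled on it scheduled.

We cannot get our heads around this at all.

I really wish the PLEG check were a little more verbose and that we had some actual detailed documentation about what the beep it is doing, because there seems to be a HUGE number of open issues about it and nobody really knows what it is. For such a critical module, I would love to be able to reproduce the checks that it sees as failing.

I second the thoughts on PLEG's mysteriousness. On my end, though, after much work for my client, stabilizing coreos and its misbehaviour with networks helped a lot.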

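The PLEG health check does very little. In every iteration, it calls docker ps to detect container state changes, and calls docker ps and inspect to get the details of those containers.

After finishing each iteration, it updates a timestamp. If the timestamp has not been updated for a while (i.e., 3 minutes), the health check fails.

Unless your node is loaded with so many pods that PLEG can't finish all of this in 3 minutes (which should not happen), the most probable cause is that docker is slow. You may not observe that in your occasional docker ps check, but that doesn't mean it isn't there.

If we didn't expose the "unhealthy" status, it would hide many problems from users and potentially cause more issues. For example, kubelet would silently fail to react to changes in a timely manner and cause even more confusion.

Suggestions on how to make this more debuggable are welcome...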
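The relist-then-timestamp behaviour described in this comment can be sketched as follows. This is an illustrative model only, not the kubelet's implementation: the class name `PlegSketch`, the `list_containers` callback (standing in for the docker ps / inspect calls) and the injectable `clock` are all assumptions made for the example. Only the 3-minute threshold comes from the thread.

```python
import time

# "threshold is 3m0s" in the kubelet messages quoted in this thread.
RELIST_THRESHOLD_SECONDS = 180.0


class PlegSketch:
    """Toy model: relist containers, emit state changes, track a timestamp."""

    def __init__(self, list_containers, clock=time.monotonic):
        # list_containers() returns {container_id: state}; it stands in for
        # the runtime calls and may block for a long time if the runtime is slow.
        self._list_containers = list_containers
        self._clock = clock
        self._last_relist = clock()
        self._previous = {}

    def relist(self):
        current = self._list_containers()
        events = []
        for cid, state in current.items():
            if self._previous.get(cid) != state:
                events.append((cid, state))
        for cid in self._previous:
            if cid not in current:
                events.append((cid, "removed"))
        self._previous = dict(current)
        # The timestamp moves only once the whole iteration has finished, so a
        # hung or slow runtime call leaves it stale.
        self._last_relist = self._clock()
        return events

    def healthy(self):
        return self._clock() - self._last_relist <= RELIST_THRESHOLD_SECONDS
```

In this model a single blocked `list_containers()` call is enough to make `healthy()` return False once three minutes pass. That matches the point above that an occasional fast docker ps from a shell does not rule out a slow runtime.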

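Running into PLEG unhealthy warnings and flapping node health status: k8s 1.6.4 with weave. It only appears on a subset of (otherwise identical) nodes.

In our case, the flapping workers and the pods stuck in ContainerCreating were caused by the security groups of our EC2 instances not allowing weave traffic between the master and the workers, and among the workers. As a result, the nodes could not come up properly and got stuck in NotReady.

kubernetes 1.6.4

With proper security groups it works now.

I am experiencing something like this issue with this config...

Kubernetes version (use kubectl version): 1.6.4

Environment:

Cloud provider or hardware configuration: single System76 server

OS (e.g. from /etc/os-release): Ubuntu 16.04.2 LTS

Kernel (e.g. uname -a): Linux system76-server 4.4.0-78-generic #99-Ubuntu SMP Thu Apr 27 15:29:09 UTC 2017 x86_64 x86_64 x86_64 GNU/Linux

Install tools: kubeadm + weave.works

Since this is a single-node cluster, I don't think my version of this issue is related to security groups or firewalls.

The security group explanation would make sense if you were just starting up the cluster. But the issues we're seeing are on clusters that have been running for months, with security groups in place.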

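I had something similar just happen to me running kubelet version 1.6.2 on GKE.

One of our nodes went into a not ready state. The kubelet logs on that node had two complaints: one, that the PLEG status check failed, and two, interestingly, that the image listing operations failed.

Some examples of the image function calls that failed:

image_gc_manager.go:176

kuberuntime_image.go:106

remote_image.go:61

I am assuming these are calls to the docker daemon.

As this was happening, I saw disk IO spike a lot, especially read operations: from the ~50 kb/s mark to the 8 mb/s mark.

It corrected itself after about 30-45 minutes, but maybe it was an image GC sweep that caused the increased IO?

As has been said, PLEG monitors the pods through the docker daemon. If the daemon is doing a lot of operations at once, could the PLEG checks be queued?

I'm seeing this problem on 1.6.4 and 1.6.6 (on GKE), with flapping NotReady as the result. Since this is the latest version available on GKE, I'd love to have any fixes backported to the next 1.6 release.

One interesting thing is that the time PLEG was last seen active doesn't change and is always a _huge_ number (perhaps it's at some limit of whatever type it's stored in).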

[container runtime is down PLEG is not healthy: pleg was last seen active 2562047h47m16.854775807s ago; threshold is 3m0s]
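The guess that this value sits at a type limit can be checked with a little arithmetic. Go's time.Duration is a signed 64-bit count of nanoseconds, and the sketch below formats the largest such value in the same hours/minutes/seconds layout as the log. The formatter is a simplification written for this example (it always prints nine fractional digits, unlike Go's own formatting), and reading the result as "the last-relist timestamp was never set" is only one plausible interpretation, not something the thread confirms.

```python
MAX_INT64 = 2**63 - 1  # largest value of a signed 64-bit nanosecond counter


def format_go_duration(nanoseconds):
    """Render nanoseconds as <h>h<m>m<s>.<9-digit fraction>s (simplified)."""
    hours, rem = divmod(nanoseconds, 3600 * 10**9)
    minutes, rem = divmod(rem, 60 * 10**9)
    seconds, nanos = divmod(rem, 10**9)
    return f"{hours}h{minutes}m{seconds}.{nanos:09d}s"


print(format_go_duration(MAX_INT64))  # prints 2562047h47m16.854775807s
```

The printed value is identical to the one in the kubelet message, roughly 292 years, which supports the idea that the duration has saturated rather than that PLEG was genuinely last active that long ago.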


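@bergman I haven't seen this, but if it were the case, your node would never have been ready. Feel free to report this through the GKE channel so that the GKE team can investigate further.

> It corrected itself after about 30-45 minutes, but maybe it was an image GC sweep that caused the increased IO?

This is certainly possible. Image GC could sometimes cause the docker daemon to respond very slowly. 30-45 minutes sounds quite long, though. @zoltrain, were images being removed throughout the entire duration?

To reiterate my previous statement, PLEG does very little, and it fails the health check only because the docker daemon is unresponsive. We surface this information through the PLEG health check to let the control plane know that the node is not getting the container stats properly (and is not reacting to them). Blindly removing this check may mask more serious problems.

To update: we found that the issue on our end was related to weave and IP slice provisioning. Because we terminate nodes often in AWS, weave didn't originally account for the permanent removal of nodes from a cluster and the new IPs that would follow. As a result, networking wouldn't set up correctly, so anything that had to do with the internal ranges wouldn't come up correctly.

https://github.com/weaveworks/weave/issues/2970

For those using weave.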

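> [container runtime is down PLEG is not healthy: pleg was last seen active 2562047h47m16.854775807s ago; threshold is 3m0s]

> @bergman I haven't seen this, but if it were the case, your node would never have been ready. Feel free to report this through the GKE channel so that the GKE team can investigate further.

It is ready most of the time. I believe kubelet is either restarted because of this check, or some other check is signaling the Ready event. We see about 10 seconds of NotReady for every 60 seconds. The rest of the time the node is Ready.

@yujuhong I think the PLEG logging could be improved. Saying "PLEG is not healthy" is very confusing to the end user and does not help in diagnosing problems. Including why the container runtime failed, or some details about the container runtime not responding, would be more useful.

I don't see the flapping, but the node is always in the not ready state with 1.6.4 and calico, not weave.

> @yujuhong I think the PLEG logging could be improved. Saying "PLEG is not healthy" is very confusing to the end user and does not help in diagnosing problems. Including why the container runtime failed, or some details about the container runtime not responding, would be more useful.

Sure. Feel free to send a PR.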

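I was having this issue while docker images were being cleaned up. I guess docker was too busy. Once the images were removed, things went back to normal.

I ran into the same problem. I suspect the reason is that ntpd corrected the current time.

I saw ntpd correct the time on v1.6.9: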

Sep 12 19:05:08 node-6 systemd: Started logagt.

Sep 12 19:05:08 node-6 systemd: Starting logagt...

Sep 12 19:05:09 node-6 cnrm: "Log":"2017-09-12 19:05:09.197083#011ERROR#011node-6#011knitter.cnrm.mod-init#011TransactionID=1#011InstanceID=1174#011[ObjectType=null,ObjectID=null]#011registerOir: k8s.GetK8sClientSingleton().RegisterOir(oirName: hugepage, qty: 2048) FAIL, error: dial tcp 120.0.0.250:8080: getsockopt: no route to host, retry#011[init.go]#011[68]"

Sep 12 11:04:53 node-6 ntpd[902]: 0.0.0.0 c61c 0c clock_step -28818.771869 s

Sep 12 11:04:53 node-6 ntpd[902]: 0.0.0.0 c614 04 freq_mode

Sep 12 11:04:53 node-6 systemd: Time has been changed

Sep 12 11:04:54 node-6 ntpd[902]: 0.0.0.0 c618 08 no_sys_peer

Sep 12 11:05:04 node-6 systemd: Reloading.

Sep 12 11:05:04 node-6 systemd: Configuration file /usr/lib/systemd/system/auditd.service is marked world-inaccessible. This has no effect as configuration data is accessible via APIs without restrictions. Proceeding anyway.

Sep 12 11:05:04 node-6 systemd: Started opslet.

Sep 12 11:05:04 node-6 systemd: Starting opslet...

Sep 12 11:05:13 node-6 systemd: Reloading.

Sep 12 11:05:22 node-6 kubelet: E0912 11:05:22.425676 2429 event.go:259] Could not construct reference to: '&v1.Node{TypeMeta:v1.TypeMeta{Kind:"", APIVersion:""}, ObjectMeta:v1.ObjectMeta{Name:"120.0.0.251", GenerateName:"", Namespace:"", SelfLink:"", UID:"", ResourceVersion:"", Generation:0, CreationTimestamp:v1.Time{Time:time.Time{sec:0, nsec:0, loc:(*time.Location)(nil)}}, DeletionTimestamp:(*v1.Time)(nil), DeletionGracePeriodSeconds:(*int64)(nil), Labels:map[string]string{"beta.kubernetes.io/os":"linux", "beta.kubernetes.io/arch":"amd64", "kubernetes.io/hostname":"120.0.0.251"}, Annotations:map[string]string{"volumes.kubernetes.io/controller-managed-attach-detach":"true"}, OwnerReferences:[]v1.OwnerReference(nil), Finalizers:[]string(nil), ClusterName:""}, Spec:v1.NodeSpec{PodCIDR:"", ExternalID:"120.0.0.251", ProviderID:"", Unschedulable:false, Taints:[]v1.Taint(nil)}, Status:v1.NodeStatus{Capacity:v1.ResourceList{"cpu":resource.Quantity{i:resource.int64Amount{value:4000, scale:-3}, d:resource.infDecAmount{Dec:(*inf.Dec)(nil)}, l:[]int64(nil), s:"", Format:"DecimalSI"}, "memory":resource.Quantity{i:resource.int64Amount{value:3974811648, scale:0}, d:resource.infDecAmount{Dec:(*inf.Dec)(nil)}, l:[]int64(nil), s:"", Format:"BinarySI"}, "hugePages":resource.Quantity{i:resource.int64Amount{value:1024, scale:0}, d:resource.infDecAmount{Dec:(*inf.Dec)(nil)}, l:[]int64(nil), s:"", Format:"DecimalSI"}, "pods":resource.Quantity{i:resource.int64Amount{value:110, scale:0}, d:resource.infDecAmount{Dec:(*inf.Dec)(nil)}, l:[]int64(nil), s:"", Format:"DecimalSI"}}, Allocatable:v1.ResourceList{"cpu":resource.Quantity{i:resource.int64Amount{value:3500, scale:-3}, d:resource.infDecAmount{Dec:(*inf.Dec)(nil)}, l:[]int64(nil), s:"", Format:"DecimalSI"}, "memory":resource.Quantity{i:resource.int64Amount{value:1345666048, scale:0}, d:resource.infDecAmount{Dec:(*inf.Dec)(nil)}, l:[]int64(nil), s:"", Format:"BinarySI"}, "hugePages":resource.Quantity{i:resource.int64Amount{value:1024, 
scale:0}, d:resource.infDecAmount{Dec:(*inf.Dec)(nil)}, l:[]int64(nil), s:"",

Sep 12 11:05:22 node-6 kubelet: Format:"DecimalSI"}, "pods":resource.Quantity{i:resource.int64Amount{value:110, scale:0}, d:resource.infDecAmount{Dec:(*inf.Dec)(nil)}, l:[]int64(nil), s:"", Format:"DecimalSI"}}, Phase:"", Conditions:[]v1.NodeCondition{v1.NodeCondition{Type:"OutOfDisk", Status:"False", LastHeartbeatTime:v1.Time{Time:time.Time{sec:63640811081, nsec:196025689, loc:(*time.Location)(0x4e8e3a0)}}, LastTransitionTime:v1.Time{Time:time.Time{sec:63640811081, nsec:196025689, loc:(*time.Location)(0x4e8e3a0)}}, Reason:"KubeletHasSufficientDisk", Message:"kubelet has sufficient disk space available"}, v1.NodeCondition{Type:"MemoryPressure", Status:"False", LastHeartbeatTime:v1.Time{Time:time.Time{sec:63640811081, nsec:196099492, loc:(*time.Location)(0x4e8e3a0)}}, LastTransitionTime:v1.Time{Time:time.Time{sec:63640811081, nsec:196099492, loc:(*time.Location)(0x4e8e3a0)}}, Reason:"KubeletHasSufficientMemory", Message:"kubelet has sufficient memory available"}, v1.NodeCondition{Type:"DiskPressure", Status:"False", LastHeartbeatTime:v1.Time{Time:time.Time{sec:63640811081, nsec:196107935, loc:(*time.Location)(0x4e8e3a0)}}, LastTransitionTime:v1.Time{Time:time.Time{sec:63640811081, nsec:196107935, loc:(*time.Location)(0x4e8e3a0)}}, Reason:"KubeletHasNoDiskPressure", Message:"kubelet has no disk pressure"}, v1.NodeCondition{Type:"Ready", Status:"False", LastHeartbeatTime:v1.Time{Time:time.Time{sec:63640811081, nsec:196114314, loc:(*time.Location)(0x4e8e3a0)}}, LastTransitionTime:v1.Time{Time:time.Time{sec:63640811081, nsec:196114314, loc:(*time.Location)(0x4e8e3a0)}}, Reason:"KubeletNotReady", Message:"container runtime is down,PLEG is not healthy: pleg was last seen active 2562047h47m16.854775807s ago; threshold is 3m0s,network state unknown"}}, Addresses:[]v1.NodeAddress{v1.NodeAddress{Type:"LegacyHostIP", Address:"120.0.0.251"}, v1.NodeAddress{Type:"InternalIP", Address:"120.0.0.251"}, v1.NodeAddress{Type:"Hostname", Address:"120.0.0.251"}}, 
DaemonEndpoints:v1.NodeDaemonEndpoints{KubeletEndpoint:v1.DaemonEndpoint{Port:10250}}, NodeInfo:v1.NodeS

Mark.

Same issue here.

It appears when a pod is killed but gets stuck in the killing state: Normal Killing Killing container with docker id 472802bf1dba: Need to kill pod.

The kubelet log looks like this:

skipping pod synchronization - [PLEG is not healthy: pleg was last seen active

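k8s cluster version: 1.6.4

@xcompass are you using the --image-gc-high-threshold and --image-gc-low-threshold flags in your kubelet configuration? I suspect kubelet GC is keeping the docker daemon busy.

@alirezaDavid I ran into the same problem as you: pods start and terminate very slowly, and the node becomes NotReady now and then. Restarting kubelet on the node, or restarting docker, seems to solve the problem, but this is not the right way.

@yu-yang2 yeah, exactly, I restart kubelet.

But before restarting kubelet, I checked docker ps and systemctl -u docker, and everything seemed to be working.

We had this issue on kubernetes with weave and autoscalers. It turned out that weave had no more IP addresses to assign. This was detected by running weave status ipam, as described in this issue: https :

The root cause is here: https :

The documentation warns about autoscalers and weave: //www.weave.works/docs/net/latest/operational-guide/tasks/

When we ran weave --local status ipam, there were hundreds of unavailable nodes with large numbers of IP addresses assigned to them. This happens because the autoscaler terminates instances without letting weave know, which left only a handful of addresses for the nodes that were actually connected. I used weave rmpeer to clear out some of the unavailable peers. This gave the node I was running on a group of IP addresses. I then went to other running weave nodes and ran a few rmpeer commands from them as well (I'm not sure whether that is necessary).

I terminated some ec2 instances, and new ones were brought up by the autoscaler and immediately assigned IP addresses.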
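As a rough illustration of the clean-up described above, the sketch below scans weave status ipam output for peers marked unreachable and prints (without running) a weave rmpeer command for each. The line layout it parses (`<peer-id>(<nickname>) <n> IPs (...) - unreachable!`) is an assumption about the tool's output, not something shown in this thread, and the sample peers are invented. Check the real output on your cluster before relying on it.

```python
import re

# ASSUMED line layout: "<peer-id>(<nickname>)  <count> IPs (...) [- unreachable!]"
LINE_RE = re.compile(r"^(?P<peer>[0-9a-f:]+)\((?P<name>[^)]*)\)\s+(?P<ips>\d+) IPs")


def unreachable_peers(ipam_output):
    """Return (nickname, ip_count) for every peer flagged as unreachable."""
    peers = []
    for raw in ipam_output.splitlines():
        line = raw.strip()
        match = LINE_RE.match(line)
        if match and line.endswith("unreachable!"):
            peers.append((match.group("name"), int(match.group("ips"))))
    return peers


if __name__ == "__main__":
    # Hypothetical sample; real output would come from `weave status ipam`.
    sample = (
        "aa:aa:aa:aa:aa:01(node-a)  32768 IPs (50.0% of total) (42 active)\n"
        "aa:aa:aa:aa:aa:02(node-b)  16384 IPs (25.0% of total) - unreachable!\n"
    )
    for name, ips in unreachable_peers(sample):
        # Only print the command; reclaiming addresses is left to the operator.
        print(f"weave rmpeer {name}  # would reclaim {ips} IPs")
```

Printing the commands rather than executing them keeps the sketch safe to run, since removing a peer that is only temporarily unreachable could cause address conflicts.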

Hi folks. In my case, I hit the PLEG issue when removing sandboxes, because the sandbox had no network namespace. That situation is described in https://github.com/kubernetes/kubernetes/issues/44307

My issue was:

- Pod deployed.

- Pod deleted. The application's container was deleted without problems. The application's sandbox was not deleted.

- PLEG tries to commit/delete/finish the sandbox, cannot do so, and marks the node as unhealthy.

As far as I can see, everyone in this bug is using Kubernetes 1.6.*; it should be fixed in 1.7.

PS. I saw this situation in origin 3.6 (kubernetes 1.6).

Hi,

I have a PLEG issue myself (Azure, k8s 1.7.7):

Oct 5 08:13:27 k8s-agent-27569017-1 docker[1978]: E1005 08:13:27.386295 2209 remote_runtime.go:168] ListPodSandbox with filter "nil" from runtime service failed: rpc error: code = 4 desc = context deadline exceeded

Oct 5 08:13:27 k8s-agent-27569017-1 docker[1978]: E1005 08:13:27.386351 2209 kuberuntime_sandbox.go:197] ListPodSandbox failed: rpc error: code = 4 desc = context deadline exceeded

Oct 5 08:13:27 k8s-agent-27569017-1 docker[1978]: E1005 08:13:27.386360 2209 generic.go:196] GenericPLEG: Unable to retrieve pods: rpc error: code = 4 desc = context deadline exceeded

Oct 5 08:13:30 k8s-agent-27569017-1 docker[1978]: I1005 08:13:30.953599 2209 helpers.go:102] Unable to get network stats from pid 60677: couldn't read network stats: failure opening /proc/60677/net/dev: open /proc/60677/net/dev: no such file or directory

Oct 5 08:13:30 k8s-agent-27569017-1 docker[1978]: I1005 08:13:30.953634 2209 helpers.go:125] Unable to get udp stats from pid 60677: failure opening /proc/60677/net/udp: open /proc/60677/net/udp: no such file or directory

Oct 5 08:13:30 k8s-agent-27569017-1 docker[1978]: I1005 08:13:30.953642 2209 helpers.go:132] Unable to get udp6 stats from pid 60677: failure opening /proc/60677/net/udp6: open /proc/60677/net/udp6: no such file or directory

Oct 5 08:13:31 k8s-agent-27569017-1 docker[1978]: I1005 08:13:31.763914 2209 kubelet.go:1820] skipping pod synchronization - [PLEG is not healthy: pleg was last seen active 13h42m52.628402637s ago; threshold is 3m0s]

Oct 5 08:13:35 k8s-agent-27569017-1 docker[1978]: I1005 08:13:35.977487 2209 kubelet_node_status.go:467] Using Node Hostname from cloudprovider: "k8s-agent-27569017-1"

Oct 5 08:13:36 k8s-agent-27569017-1 docker[1978]: I1005 08:13:36.764105 2209 kubelet.go:1820] skipping pod synchronization - [PLEG is not healthy: pleg was last seen active 13h42m57.628610126s ago; threshold is 3m0s]

Oct 5 08:13:39 k8s-agent-27569017-1 docker[1275]: time="2017-10-05T08:13:39.185111999Z" level=warning msg="Health check error: rpc error: code = 4 desc = context deadline exceeded"

Oct 5 08:13:41 k8s-agent-27569017-1 docker[1978]: I1005 08:13:41.764235 2209 kubelet.go:1820] skipping pod synchronization - [PLEG is not healthy: pleg was last seen active 13h43m2.628732806s ago; threshold is 3m0s]

Oct 5 08:13:41 k8s-agent-27569017-1 docker[1978]: I1005 08:13:41.875074 2209 helpers.go:102] Unable to get network stats from pid 60677: couldn't read network stats: failure opening /proc/60677/net/dev: open /proc/60677/net/dev: no such file or directory

Oct 5 08:13:41 k8s-agent-27569017-1 docker[1978]: I1005 08:13:41.875102 2209 helpers.go:125] Unable to get udp stats from pid 60677: failure opening /proc/60677/net/udp: open /proc/60677/net/udp: no such file or directory

Oct 5 08:13:41 k8s-agent-27569017-1 docker[1978]: I1005 08:13:41.875113 2209 helpers.go:132] Unable to get udp6 stats from pid 60677: failure opening /proc/60677/net/udp6: open /proc/60677/net/udp6: no such file or directory

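I'm running v1.7.4+coreos.0 on CoreOS stable. My k8s nodes go down (and don't come back up until I restart the docker and/or kubelet service) as often as every 8 hours because of PLEG. The containers keep running, but k8s reports them as Unknown. I should mention that I deploy using Kubespray.

We've tracked the issue down to what we believe is the backoff algorithm in GRPC when communicating with docker to list containers. This PR, https://github.com/moby/moby/pull/33483, changes the backoff to a maximum of 2 seconds and is available in 17.06; however, kubernetes doesn't support 17.06 until 1.8.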

問題を引き起こしているPLEGの行はこれです。

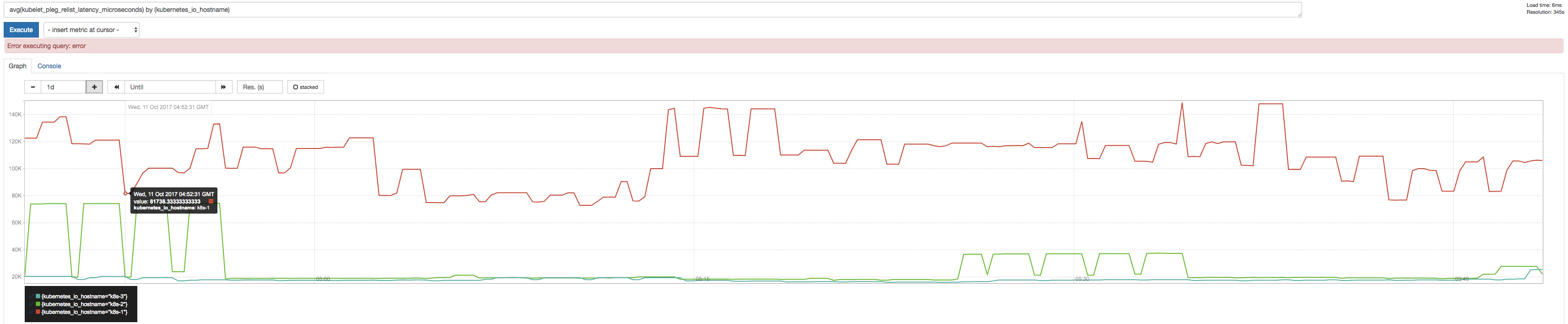

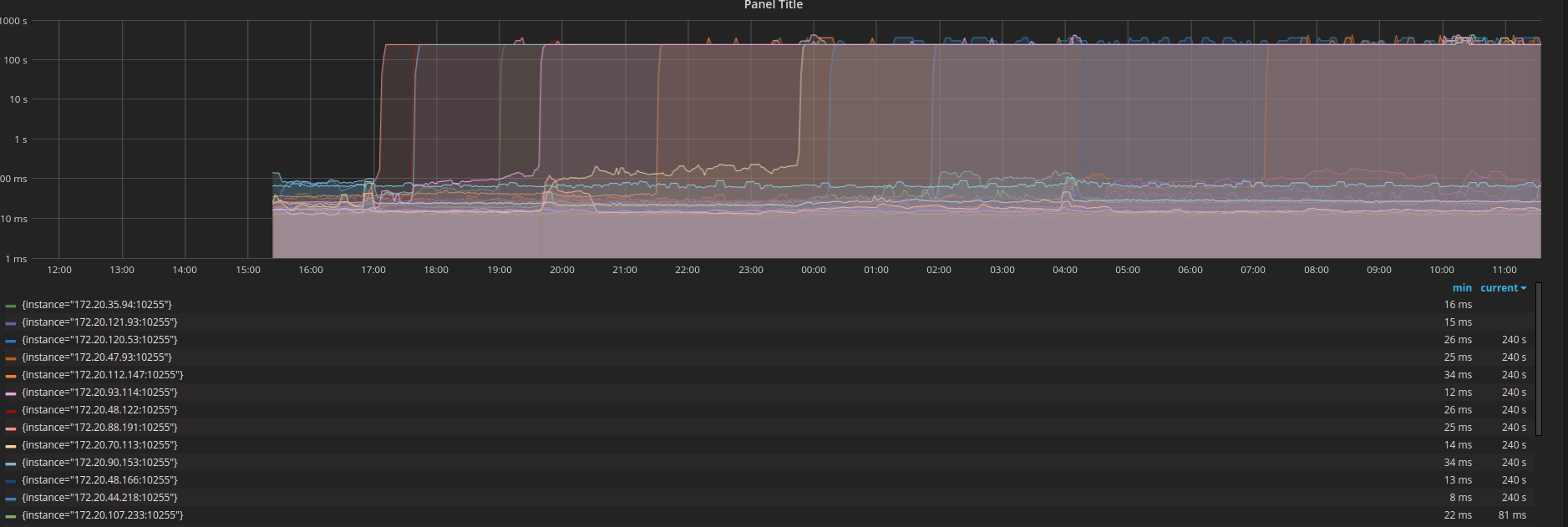

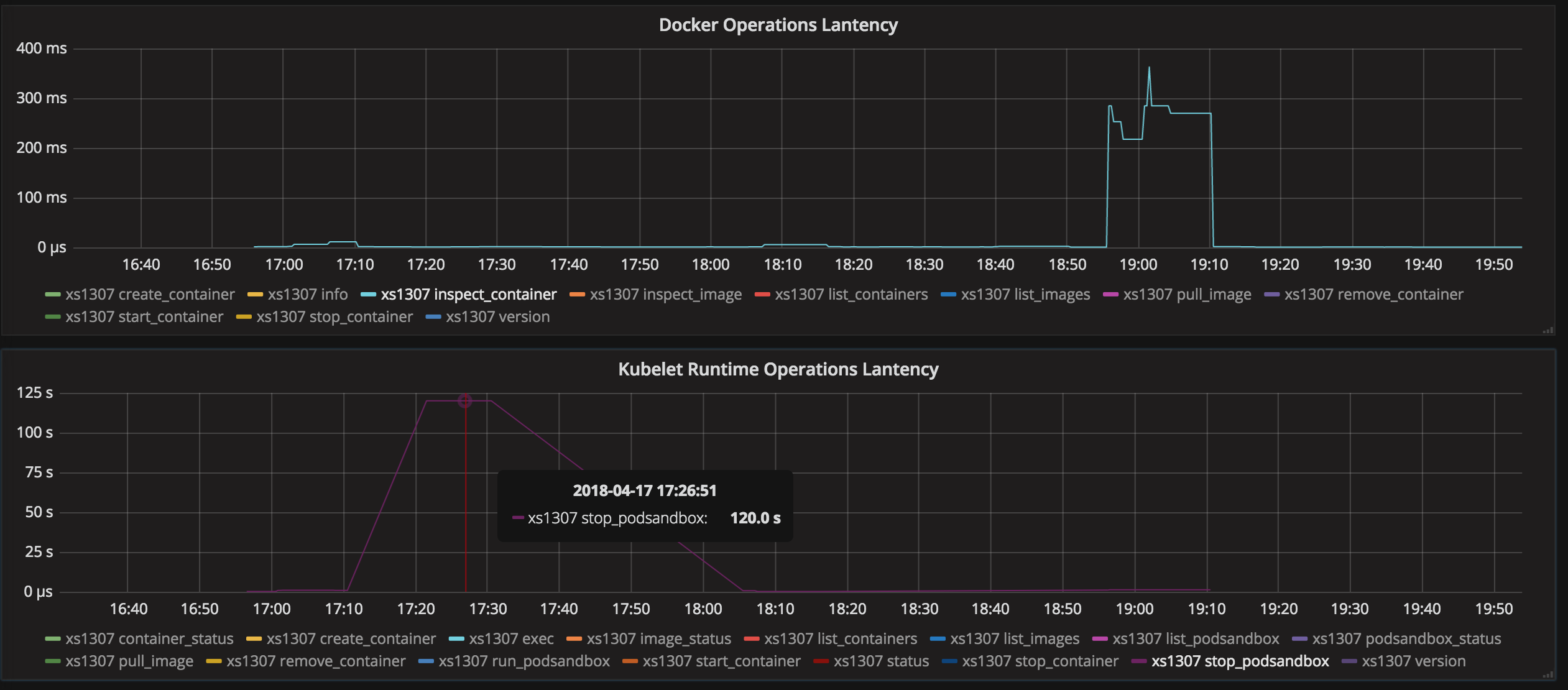

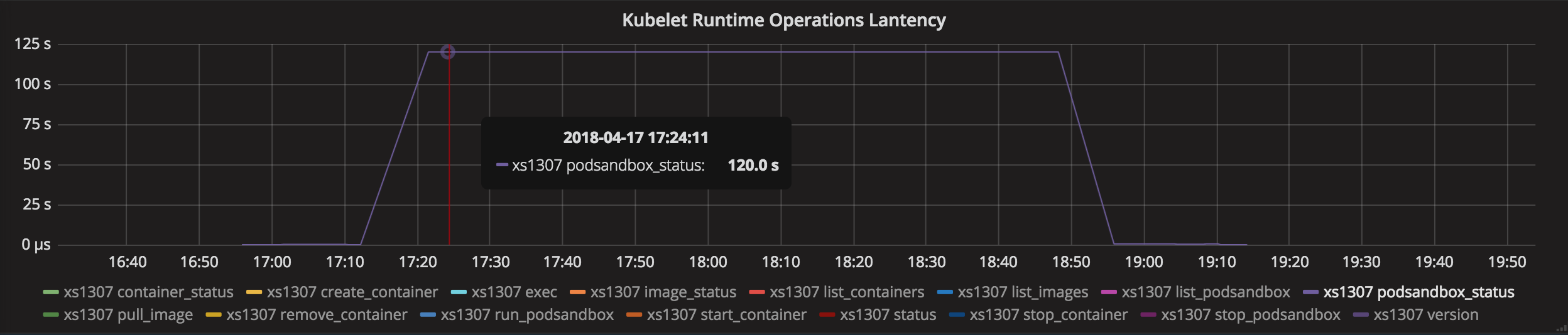

プロメテウスを使用してPLEGRelistIntervalメトリックとPLEGRelistLatencyメトリックを検査したところ、バックオフアルゴリズム理論とかなり一致する次の結果が得られました。

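@ssboisen thanks for reporting with the graphs (they do look interesting)!

> My k8s nodes go down (and don't come back up until I restart the docker and/or kubelet service) as often as every 8 hours because of PLEG. The containers keep running, but k8s reports them as Unknown. I should mention that I deploy using Kubespray.

Some questions I have:

- Does restarting either docker or kubelet resolve the problem?

- When the issue happens, does docker ps respond normally?

> We've tracked the issue down to what we believe is the backoff algorithm in GRPC when communicating with docker to list containers. This PR, moby/moby#33483, changes the backoff to a maximum of 2 seconds and is available in 17.06; however, kubernetes doesn't support 17.06 until 1.8.

I looked at the moby issue you mentioned, but in that discussion all the docker ps calls were still functioning properly (even though the dockerd <-> containerd connection was broken). This seems different from the PLEG issue you mentioned. Also, kubelet doesn't talk to dockerd through grpc. It does use grpc to communicate with dockershim, but they are essentially the same process and should not run into the problem of one being killed while the other is still alive (leading to a broken connection).

         grpc              http           grpc
kubelet <----> dockershim <----> dockerd <----> containerd

What was the error message you saw in the kubelet log? Most of the comments above had the "context deadline exceeded" error message.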

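> - Does restarting either docker or kubelet resolve the problem?

It varies. Most often it's enough to restart kubelet, but we have had situations where a docker restart was necessary.

> - When the issue happens, does docker ps respond normally?

We have no problems running docker ps on the node when PLEG is acting up. I didn't know about dockershim. I wonder whether the connection between kubelet and dockershim is the problem: could the shim be failing to answer in time, leading to climbing backoffs?

The error messages in the log are a combination of the following two lines: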

generic.go:196] GenericPLEG: Unable to retrieve pods: rpc error: code = 14 desc = grpc: the connection is unavailable

kubelet.go:1820] skipping pod synchronization - [container runtime is down PLEG is not healthy: pleg was last seen active 11h5m56.959313178s ago; threshold is 3m0s]

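Do you have any suggestions on how we can get more information so that we can better debug this problem?

> - Does restarting either docker or kubelet resolve the problem?

Yes, just restarting docker fixes it, so it is not a k8s issue.

> - When the issue happens, does docker ps respond normally?

Nope. It hangs. Docker hangs when running any container on that node.

It's probably a docker issue, then, and not k8s, which is doing the right thing. However, I could not find out why docker is misbehaving here. All CPU/memory/disk resources look great.

Restarting the docker service restores it to a good state.

> Do you have any suggestions on how we can get more information so that we can better debug this problem?

I think the first step is to confirm which component (dockershim or docker/containerd) returned the error message.

You could probably figure this out by cross-referencing the kubelet and docker logs.

> It's probably a docker issue, then, and not k8s, which is doing the right thing. However, I could not find out why docker is misbehaving here. All CPU/memory/disk resources look great.

Yeah. In your case, it looks like the docker daemon actually hangs. You can start the docker daemon in debug mode and get a stack trace when it happens.

https://docs.docker.com/engine/admin/#force-a-stack-trace-to-be-logged

@yujuhong I ran into this issue again after a load test of k8s. Almost all the nodes became not ready and did not recover, even several days after the pods had been cleaned up. I turned on verbose mode on all the kubelets and captured the logs below. I hope these logs help solve the issue.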
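One way to do that cross-referencing is to put both logs on a single timeline. The sketch below is an illustration rather than an established tool: it recognises the two timestamp styles that appear in the logs quoted earlier in this thread (the kubelet's `E1005 08:13:27.386360` header and docker's `time="2017-10-05T08:13:39.185111999Z"` field) and merges the lines in time order. The function names are hypothetical. Because the kubelet header carries no year, the year is passed in, and both logs are assumed to use the same time zone.

```python
import re
from datetime import datetime

# Kubelet header: severity letter, MMDD, then HH:MM:SS.microseconds.
KLOG_RE = re.compile(r"\b[IWEF](\d{2})(\d{2}) (\d{2}):(\d{2}):(\d{2})\.(\d{6})\b")
# Docker daemon field: RFC 3339 timestamp with nanoseconds.
DOCKER_RE = re.compile(
    r'time="(\d{4})-(\d{2})-(\d{2})T(\d{2}):(\d{2}):(\d{2})\.(\d+)Z"'
)


def timestamp(line, year):
    """Extract a datetime from a kubelet or docker log line, else None."""
    match = DOCKER_RE.search(line)
    if match:
        y, mo, d, h, mi, s, frac = match.groups()
        micro = int(frac[:6].ljust(6, "0"))  # truncate nanoseconds
        return datetime(int(y), int(mo), int(d), int(h), int(mi), int(s), micro)
    match = KLOG_RE.search(line)
    if match:
        mo, d, h, mi, s, micro = (int(g) for g in match.groups())
        return datetime(year, mo, d, h, mi, s, micro)
    return None


def merge_by_time(kubelet_lines, docker_lines, year):
    """Return (timestamp, source, line) tuples from both logs, oldest first."""
    tagged = []
    for source, lines in (("kubelet", kubelet_lines), ("docker", docker_lines)):
        for line in lines:
            ts = timestamp(line, year)
            if ts is not None:
                tagged.append((ts, source, line))
    tagged.sort(key=lambda item: item[0])
    return tagged
```

Reading the merged output around the first "context deadline exceeded" entry may show whether docker logged an error of its own just before the kubelet did, or whether the docker log is silent at that moment.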

Oct 24 21:16:39 docker34-91 kubelet[24165]: I1024 21:16:39.539054 24165 config.go:101] Looking for [api file], have seen map[file:{} api:{}]

Oct 24 21:16:39 docker34-91 kubelet[24165]: I1024 21:16:39.639305 24165 config.go:101] Looking for [api file], have seen map[file:{} api:{}]

Oct 24 21:16:39 docker34-91 kubelet[24165]: I1024 21:16:39.739585 24165 config.go:101] Looking for [api file], have seen map[file:{} api:{}]

Oct 24 21:16:39 docker34-91 kubelet[24165]: I1024 21:16:39.839829 24165 config.go:101] Looking for [api file], have seen map[file:{} api:{}]

Oct 24 21:16:39 docker34-91 kubelet[24165]: I1024 21:16:39.940111 24165 config.go:101] Looking for [api file], have seen map[file:{} api:{}]

Oct 24 21:16:40 docker34-91 kubelet[24165]: I1024 21:16:40.040374 24165 config.go:101] Looking for [api file], have seen map[file:{} api:{}]

Oct 24 21:16:40 docker34-91 kubelet[24165]: I1024 21:16:40.128789 24165 kubelet.go:2064] Container runtime status: Runtime Conditions: RuntimeReady=true reason: message:, NetworkReady=true reason: message:

Oct 24 21:16:40 docker34-91 kubelet[24165]: I1024 21:16:40.140634 24165 config.go:101] Looking for [api file], have seen map[file:{} api:{}]

Oct 24 21:16:40 docker34-91 kubelet[24165]: I1024 21:16:40.240851 24165 config.go:101] Looking for [api file], have seen map[file:{} api:{}]

Oct 24 21:16:40 docker34-91 kubelet[24165]: I1024 21:16:40.341125 24165 config.go:101] Looking for [api file], have seen map[file:{} api:{}]

Oct 24 21:16:40 docker34-91 kubelet[24165]: I1024 21:16:40.441471 24165 config.go:101] Looking for [api file], have seen map[api:{} file:{}]

Oct 24 21:16:40 docker34-91 kubelet[24165]: I1024 21:16:40.541781 24165 config.go:101] Looking for [api file], have seen map[api:{} file:{}]

Oct 24 21:16:40 docker34-91 kubelet[24165]: I1024 21:16:40.642070 24165 config.go:101] Looking for [api file], have seen map[file:{} api:{}]

Oct 24 21:16:40 docker34-91 kubelet[24165]: I1024 21:16:40.742347 24165 config.go:101] Looking for [api file], have seen map[file:{} api:{}]

Oct 24 21:16:40 docker34-91 kubelet[24165]: I1024 21:16:40.842562 24165 config.go:101] Looking for [api file], have seen map[file:{} api:{}]

Oct 24 21:16:40 docker34-91 kubelet[24165]: I1024 21:16:40.942867 24165 config.go:101] Looking for [api file], have seen map[api:{} file:{}]

Oct 24 21:16:41 docker34-91 kubelet[24165]: I1024 21:16:41.006656 24165 kubelet.go:1752] skipping pod synchronization - [PLEG is not healthy: pleg was last seen active 6m20.171705404s ago; threshold is 3m0s]

Oct 24 21:16:41 docker34-91 kubelet[24165]: I1024 21:16:41.043126 24165 config.go:101] Looking for [api file], have seen map[file:{} api:{}]

Oct 24 21:16:41 docker34-91 kubelet[24165]: I1024 21:16:41.143372 24165 config.go:101] Looking for [api file], have seen map[file:{} api:{}]

Oct 24 21:16:41 docker34-91 kubelet[24165]: I1024 21:16:41.243620 24165 config.go:101] Looking for [api file], have seen map[file:{} api:{}]

Oct 24 21:16:41 docker34-91 kubelet[24165]: I1024 21:16:41.343911 24165 config.go:101] Looking for [api file], have seen map[file:{} api:{}]

Oct 24 21:16:41 docker34-91 kubelet[24165]: I1024 21:16:41.444156 24165 config.go:101] Looking for [api file], have seen map[file:{} api:{}]

Oct 24 21:16:41 docker34-91 kubelet[24165]: I1024 21:16:41.544420 24165 config.go:101] Looking for [api file], have seen map[file:{} api:{}]

Oct 24 21:16:41 docker34-91 kubelet[24165]: I1024 21:16:41.644732 24165 config.go:101] Looking for [api file], have seen map[file:{} api:{}]

Oct 24 21:16:41 docker34-91 kubelet[24165]: I1024 21:16:41.745002 24165 config.go:101] Looking for [api file], have seen map[file:{} api:{}]

Oct 24 21:16:41 docker34-91 kubelet[24165]: I1024 21:16:41.845268 24165 config.go:101] Looking for [api file], have seen map[file:{} api:{}]

Oct 24 21:16:41 docker34-91 kubelet[24165]: I1024 21:16:41.945524 24165 config.go:101] Looking for [api file], have seen map[file:{} api:{}]

Oct 24 21:16:42 docker34-91 kubelet[24165]: I1024 21:16:42.045814 24165 config.go:101] Looking for [api file], have seen map[file:{} api:{}]

^C

[root@docker34-91 ~]# journalctl -u kubelet -f

-- Logs begin at Wed 2017-10-25 17:19:29 CST. --

Oct 27 10:22:35 docker34-91 kubelet[24165]: 00000000 6b 38 73 00 0a 0b 0a 02 76 31 12 05 45 76 65 6e |k8s.....v1..Even|

Oct 27 10:22:35 docker34-91 kubelet[24165]: 00000010 74 12 d3 03 0a 4f 0a 33 6c 64 74 65 73 74 2d 37 |t....O.3ldtest-7|

Oct 27 10:22:35 docker34-91 kubelet[24165]: 00000020 33 34 33 39 39 64 67 35 39 2d 33 33 38 32 38 37 |34399dg59-338287|

Oct 27 10:22:35 docker34-91 kubelet[24165]: 00000030 31 36 38 35 2d 78 32 36 70 30 2e 31 34 66 31 34 |1685-x26p0.14f14|

Oct 27 10:22:35 docker34-91 kubelet[24165]: 00000040 63 30 39 65 62 64 32 64 66 66 34 12 00 1a 0a 6c |c09ebd2dff4....l|

Oct 27 10:22:35 docker34-91 kubelet[24165]: 00000050 64 74 65 73 74 2d 30 30 35 22 00 2a 00 32 00 38 |dtest-005".*.2.8|

Oct 27 10:22:35 docker34-91 kubelet[24165]: 00000060 00 42 00 7a 00 12 6b 0a 03 50 6f 64 12 0a 6c 64 |.B.z..k..Pod..ld|

Oct 27 10:22:35 docker34-91 kubelet[24165]: 00000070 74 65 73 74 2d 30 30 35 1a 22 6c 64 74 65 73 74 |test-005."ldtest|

Oct 27 10:22:35 docker34-91 kubelet[24165]: 00000080 2d 37 33 34 33 39 39 64 67 35 39 2d 33 33 38 32 |-734399dg59-3382|

Oct 27 10:22:35 docker34-91 kubelet[24165]: 00000090 38 37 31 36 38 35 2d 78 32 36 70 30 22 24 61 35 |871685-x26p0"$a5|

Oct 27 10:23:02 docker34-91 kubelet[24165]: I1027 10:23:02.098922 24165 kubelet.go:2064] Container runtime status: Runtime Conditions: RuntimeReady=true reason: message:, NetworkReady=true reason: message:

Oct 27 10:23:02 docker34-91 kubelet[24165]: I1027 10:23:02.175027 24165 config.go:101] Looking for [api file], have seen map[file:{} api:{}]

Oct 27 10:23:02 docker34-91 kubelet[24165]: I1027 10:23:02.275290 24165 config.go:101] Looking for [api file], have seen map[file:{} api:{}]

Oct 27 10:23:02 docker34-91 kubelet[24165]: I1027 10:23:02.375594 24165 config.go:101] Looking for [api file], have seen map[file:{} api:{}]

Oct 27 10:23:02 docker34-91 kubelet[24165]: I1027 10:23:02.475872 24165 config.go:101] Looking for [api file], have seen map[file:{} api:{}]

Oct 27 10:23:02 docker34-91 kubelet[24165]: I1027 10:23:02.576140 24165 config.go:101] Looking for [api file], have seen map[file:{} api:{}]

Oct 27 10:23:02 docker34-91 kubelet[24165]: I1027 10:23:02.676412 24165 config.go:101] Looking for [api file], have seen map[file:{} api:{}]

Oct 27 10:23:02 docker34-91 kubelet[24165]: I1027 10:23:02.776613 24165 config.go:101] Looking for [api file], have seen map[file:{} api:{}]

Oct 27 10:23:02 docker34-91 kubelet[24165]: I1027 10:23:02.876855 24165 config.go:101] Looking for [api file], have seen map[file:{} api:{}]

Oct 27 10:23:02 docker34-91 kubelet[24165]: I1027 10:23:02.977126 24165 config.go:101] Looking for [api file], have seen map[file:{} api:{}]

Oct 27 10:23:03 docker34-91 kubelet[24165]: I1027 10:23:03.000354 24165 status_manager.go:410] Status Manager: syncPod in syncbatch. pod UID: "a052cabc-bab9-11e7-92f6-3497f60062c3"

Oct 27 10:23:03 docker34-91 kubelet[24165]: I1027 10:23:03.000509 24165 round_trippers.go:398] curl -k -v -XGET -H "Accept: application/vnd.kubernetes.protobuf, */*" -H "User-Agent: kubelet/v1.6.4 (linux/amd64) kubernetes/d6f4332" http://172.23.48.211:8080/api/v1/namespaces/ldtest-005/pods/ldtest-276aa6023f-1106740979-hbtcv

Oct 27 10:23:03 docker34-91 kubelet[24165]: I1027 10:23:03.001753 24165 round_trippers.go:417] GET http://172.23.48.211:8080/api/v1/namespaces/ldtest-005/pods/ldtest-276aa6023f-1106740979-hbtcv 404 Not Found in 1 milliseconds

Oct 27 10:23:03 docker34-91 kubelet[24165]: I1027 10:23:03.001768 24165 round_trippers.go:423] Response Headers:

Oct 27 10:23:03 docker34-91 kubelet[24165]: I1027 10:23:03.001773 24165 round_trippers.go:426] Content-Type: application/vnd.kubernetes.protobuf

Oct 27 10:23:03 docker34-91 kubelet[24165]: I1027 10:23:03.001776 24165 round_trippers.go:426] Date: Fri, 27 Oct 2017 02:23:03 GMT

Oct 27 10:23:03 docker34-91 kubelet[24165]: I1027 10:23:03.001780 24165 round_trippers.go:426] Content-Length: 154

Oct 27 10:23:03 docker34-91 kubelet[24165]: I1027 10:23:03.001838 24165 request.go:989] Response Body:

Oct 27 10:23:03 docker34-91 kubelet[24165]: 00000000 6b 38 73 00 0a 0c 0a 02 76 31 12 06 53 74 61 74 |k8s.....v1..Stat|

Oct 27 10:23:03 docker34-91 kubelet[24165]: 00000010 75 73 12 81 01 0a 04 0a 00 12 00 12 07 46 61 69 |us...........Fai|

Oct 27 10:23:03 docker34-91 kubelet[24165]: 00000020 6c 75 72 65 1a 33 70 6f 64 73 20 22 6c 64 74 65 |lure.3pods "ldte|

Oct 27 10:23:03 docker34-91 kubelet[24165]: 00000030 73 74 2d 32 37 36 61 61 36 30 32 33 66 2d 31 31 |st-276aa6023f-11|

Oct 27 10:23:03 docker34-91 kubelet[24165]: 00000040 30 36 37 34 30 39 37 39 2d 68 62 74 63 76 22 20 |06740979-hbtcv" |

Oct 27 10:23:03 docker34-91 kubelet[24165]: 00000050 6e 6f 74 20 66 6f 75 6e 64 22 08 4e 6f 74 46 6f |not found".NotFo|

Oct 27 10:23:03 docker34-91 kubelet[24165]: 00000060 75 6e 64 2a 2e 0a 22 6c 64 74 65 73 74 2d 32 37 |und*.."ldtest-27|

Oct 27 10:23:03 docker34-91 kubelet[24165]: 00000070 36 61 61 36 30 32 33 66 2d 31 31 30 36 37 34 30 |6aa6023f-1106740|

Oct 27 10:23:03 docker34-91 kubelet[24165]: 00000080 39 37 39 2d 68 62 74 63 76 12 00 1a 04 70 6f 64 |979-hbtcv....pod|

Oct 27 10:23:03 docker34-91 kubelet[24165]: 00000090 73 28 00 30 94 03 1a 00 22 00 |s(.0....".|

Oct 27 10:23:03 docker34-91 kubelet[24165]: I1027 10:23:03.001885 24165 status_manager.go:425] Pod "ldtest-276aa6023f-1106740979-hbtcv" (a052cabc-bab9-11e7-92f6-3497f60062c3) does not exist on the server

Oct 27 10:23:03 docker34-91 kubelet[24165]: I1027 10:23:03.001900 24165 status_manager.go:410] Status Manager: syncPod in syncbatch. pod UID: "a584c63e-bab7-11e7-92f6-3497f60062c3"

Oct 27 10:23:03 docker34-91 kubelet[24165]: I1027 10:23:03.001946 24165 round_trippers.go:398] curl -k -v -XGET -H "Accept: application/vnd.kubernetes.protobuf, */*" -H "User-Agent: kubelet/v1.6.4 (linux/amd64) kubernetes/d6f4332" http://172.23.48.211:8080/api/v1/namespaces/ldtest-005/pods/ldtest-734399dg59-3382871685-x26p0

Oct 27 10:23:03 docker34-91 kubelet[24165]: I1027 10:23:03.002559 24165 round_trippers.go:417] GET http://172.23.48.211:8080/api/v1/namespaces/ldtest-005/pods/ldtest-734399dg59-3382871685-x26p0 404 Not Found in 0 milliseconds

Oct 27 10:23:03 docker34-91 kubelet[24165]: I1027 10:23:03.002569 24165 round_trippers.go:423] Response Headers:

Oct 27 10:23:03 docker34-91 kubelet[24165]: I1027 10:23:03.002573 24165 round_trippers.go:426] Content-Type: application/vnd.kubernetes.protobuf

Oct 27 10:23:03 docker34-91 kubelet[24165]: I1027 10:23:03.002577 24165 round_trippers.go:426] Date: Fri, 27 Oct 2017 02:23:03 GMT

Oct 27 10:23:03 docker34-91 kubelet[24165]: I1027 10:23:03.002580 24165 round_trippers.go:426] Content-Length: 154

Oct 27 10:23:03 docker34-91 kubelet[24165]: I1027 10:23:03.002627 24165 request.go:989] Response Body:

Oct 27 10:23:03 docker34-91 kubelet[24165]: 00000000 6b 38 73 00 0a 0c 0a 02 76 31 12 06 53 74 61 74 |k8s.....v1..Stat|

Oct 27 10:23:03 docker34-91 kubelet[24165]: 00000010 75 73 12 81 01 0a 04 0a 00 12 00 12 07 46 61 69 |us...........Fai|

Oct 27 10:23:03 docker34-91 kubelet[24165]: 00000020 6c 75 72 65 1a 33 70 6f 64 73 20 22 6c 64 74 65 |lure.3pods "ldte|

Oct 27 10:23:03 docker34-91 kubelet[24165]: 00000030 73 74 2d 37 33 34 33 39 39 64 67 35 39 2d 33 33 |st-734399dg59-33|

Oct 27 10:23:03 docker34-91 kubelet[24165]: 00000040 38 32 38 37 31 36 38 35 2d 78 32 36 70 30 22 20 |82871685-x26p0" |

Oct 27 10:23:03 docker34-91 kubelet[24165]: 00000050 6e 6f 74 20 66 6f 75 6e 64 22 08 4e 6f 74 46 6f |not found".NotFo|

Oct 27 10:23:03 docker34-91 kubelet[24165]: 00000060 75 6e 64 2a 2e 0a 22 6c 64 74 65 73 74 2d 37 33 |und*.."ldtest-73|

Oct 27 10:23:03 docker34-91 kubelet[24165]: 00000070 34 33 39 39 64 67 35 39 2d 33 33 38 32 38 37 31 |4399dg59-3382871|

Oct 27 10:23:03 docker34-91 kubelet[24165]: 00000080 36 38 35 2d 78 32 36 70 30 12 00 1a 04 70 6f 64 |685-x26p0....pod|

Oct 27 10:23:03 docker34-91 kubelet[24165]: 00000090 73 28 00 30 94 03 1a 00 22 00 |s(.0....".|

Oct 27 10:23:03 docker34-91 kubelet[24165]: I1027 10:23:03.002659 24165 status_manager.go:425] Pod "ldtest-734399dg59-3382871685-x26p0" (a584c63e-bab7-11e7-92f6-3497f60062c3) does not exist on the server

Oct 27 10:23:03 docker34-91 kubelet[24165]: I1027 10:23:03.002668 24165 status_manager.go:410] Status Manager: syncPod in syncbatch. pod UID: "2727277f-bab3-11e7-92f6-3497f60062c3"

Oct 27 10:23:03 docker34-91 kubelet[24165]: I1027 10:23:03.002711 24165 round_trippers.go:398] curl -k -v -XGET -H "User-Agent: kubelet/v1.6.4 (linux/amd64) kubernetes/d6f4332" -H "Accept: application/vnd.kubernetes.protobuf, */*" http://172.23.48.211:8080/api/v1/namespaces/ldtest-005/pods/ldtest-4bc7922c25-2238154508-xt94x

Oct 27 10:23:03 docker34-91 kubelet[24165]: I1027 10:23:03.003318 24165 round_trippers.go:417] GET http://172.23.48.211:8080/api/v1/namespaces/ldtest-005/pods/ldtest-4bc7922c25-2238154508-xt94x 404 Not Found in 0 milliseconds

Oct 27 10:23:03 docker34-91 kubelet[24165]: I1027 10:23:03.003328 24165 round_trippers.go:423] Response Headers:

Oct 27 10:23:03 docker34-91 kubelet[24165]: I1027 10:23:03.003332 24165 round_trippers.go:426] Date: Fri, 27 Oct 2017 02:23:03 GMT

Oct 27 10:23:03 docker34-91 kubelet[24165]: I1027 10:23:03.003336 24165 round_trippers.go:426] Content-Length: 154

Oct 27 10:23:03 docker34-91 kubelet[24165]: I1027 10:23:03.003339 24165 round_trippers.go:426] Content-Type: application/vnd.kubernetes.protobuf

Oct 27 10:23:03 docker34-91 kubelet[24165]: I1027 10:23:03.003379 24165 request.go:989] Response Body:

Oct 27 10:23:03 docker34-91 kubelet[24165]: 00000000 6b 38 73 00 0a 0c 0a 02 76 31 12 06 53 74 61 74 |k8s.....v1..Stat|

Oct 27 10:23:03 docker34-91 kubelet[24165]: 00000010 75 73 12 81 01 0a 04 0a 00 12 00 12 07 46 61 69 |us...........Fai|

Oct 27 10:23:03 docker34-91 kubelet[24165]: 00000020 6c 75 72 65 1a 33 70 6f 64 73 20 22 6c 64 74 65 |lure.3pods "ldte|

Oct 27 10:23:03 docker34-91 kubelet[24165]: 00000030 73 74 2d 34 62 63 37 39 32 32 63 32 35 2d 32 32 |st-4bc7922c25-22|

Oct 27 10:23:03 docker34-91 kubelet[24165]: 00000040 33 38 31 35 34 35 30 38 2d 78 74 39 34 78 22 20 |38154508-xt94x" |

Oct 27 10:23:03 docker34-91 kubelet[24165]: 00000050 6e 6f 74 20 66 6f 75 6e 64 22 08 4e 6f 74 46 6f |not found".NotFo|

Oct 27 10:23:03 docker34-91 kubelet[24165]: 00000060 75 6e 64 2a 2e 0a 22 6c 64 74 65 73 74 2d 34 62 |und*.."ldtest-4b|

Oct 27 10:23:03 docker34-91 kubelet[24165]: 00000070 63 37 39 32 32 63 32 35 2d 32 32 33 38 31 35 34 |c7922c25-2238154|

Oct 27 10:23:03 docker34-91 kubelet[24165]: 00000080 35 30 38 2d 78 74 39 34 78 12 00 1a 04 70 6f 64 |508-xt94x....pod|

Oct 27 10:23:03 docker34-91 kubelet[24165]: 00000090 73 28 00 30 94 03 1a 00 22 00 |s(.0....".|

Oct 27 10:23:03 docker34-91 kubelet[24165]: I1027 10:23:03.003411 24165 status_manager.go:425] Pod "ldtest-4bc7922c25-2238154508-xt94x" (2727277f-bab3-11e7-92f6-3497f60062c3) does not exist on the server

Oct 27 10:23:03 docker34-91 kubelet[24165]: I1027 10:23:03.003423 24165 status_manager.go:410] Status Manager: syncPod in syncbatch. pod UID: "43dd5201-bab4-11e7-92f6-3497f60062c3"

Oct 27 10:23:03 docker34-91 kubelet[24165]: I1027 10:23:03.003482 24165 round_trippers.go:398] curl -k -v -XGET -H "Accept: application/vnd.kubernetes.protobuf, */*" -H "User-Agent: kubelet/v1.6.4 (linux/amd64) kubernetes/d6f4332" http://172.23.48.211:8080/api/v1/namespaces/ldtest-005/pods/ldtest-g02c441308-3753936377-d6q69

Oct 27 10:23:03 docker34-91 kubelet[24165]: I1027 10:23:03.004051 24165 round_trippers.go:417] GET http://172.23.48.211:8080/api/v1/namespaces/ldtest-005/pods/ldtest-g02c441308-3753936377-d6q69 404 Not Found in 0 milliseconds

Oct 27 10:23:03 docker34-91 kubelet[24165]: I1027 10:23:03.004059 24165 round_trippers.go:423] Response Headers:

Oct 27 10:23:03 docker34-91 kubelet[24165]: I1027 10:23:03.004062 24165 round_trippers.go:426] Content-Type: application/vnd.kubernetes.protobuf

Oct 27 10:23:03 docker34-91 kubelet[24165]: I1027 10:23:03.004066 24165 round_trippers.go:426] Date: Fri, 27 Oct 2017 02:23:03 GMT

Oct 27 10:23:03 docker34-91 kubelet[24165]: I1027 10:23:03.004069 24165 round_trippers.go:426] Content-Length: 154

Oct 27 10:23:03 docker34-91 kubelet[24165]: I1027 10:23:03.004115 24165 request.go:989] Response Body:

Oct 27 10:23:03 docker34-91 kubelet[24165]: 00000000 6b 38 73 00 0a 0c 0a 02 76 31 12 06 53 74 61 74 |k8s.....v1..Stat|

Oct 27 10:23:03 docker34-91 kubelet[24165]: 00000010 75 73 12 81 01 0a 04 0a 00 12 00 12 07 46 61 69 |us...........Fai|

Oct 27 10:23:03 docker34-91 kubelet[24165]: 00000020 6c 75 72 65 1a 33 70 6f 64 73 20 22 6c 64 74 65 |lure.3pods "ldte|

Oct 27 10:23:03 docker34-91 kubelet[24165]: 00000030 73 74 2d 67 30 32 63 34 34 31 33 30 38 2d 33 37 |st-g02c441308-37|

Oct 27 10:23:03 docker34-91 kubelet[24165]: 00000040 35 33 39 33 36 33 37 37 2d 64 36 71 36 39 22 20 |53936377-d6q69" |

Oct 27 10:23:03 docker34-91 kubelet[24165]: 00000050 6e 6f 74 20 66 6f 75 6e 64 22 08 4e 6f 74 46 6f |not found".NotFo|

Oct 27 10:23:03 docker34-91 kubelet[24165]: 00000060 75 6e 64 2a 2e 0a 22 6c 64 74 65 73 74 2d 67 30 |und*.."ldtest-g0|

Oct 27 10:23:03 docker34-91 kubelet[24165]: 00000070 32 63 34 34 31 33 30 38 2d 33 37 35 33 39 33 36 |2c441308-3753936|

Oct 27 10:23:03 docker34-91 kubelet[24165]: 00000080 33 37 37 2d 64 36 71 36 39 12 00 1a 04 70 6f 64 |377-d6q69....pod|

Oct 27 10:23:03 docker34-91 kubelet[24165]: 00000090 73 28 00 30 94 03 1a 00 22 00 |s(.0....".|

Oct 27 10:23:03 docker34-91 kubelet[24165]: I1027 10:23:03.004142 24165 status_manager.go:425] Pod "ldtest-g02c441308-3753936377-d6q69" (43dd5201-bab4-11e7-92f6-3497f60062c3) does not exist on the server

Oct 27 10:23:03 docker34-91 kubelet[24165]: I1027 10:23:03.004148 24165 status_manager.go:410] Status Manager: syncPod in syncbatch. pod UID: "8fd9d66f-bab7-11e7-92f6-3497f60062c3"

Oct 27 10:23:03 docker34-91 kubelet[24165]: I1027 10:23:03.004195 24165 round_trippers.go:398] curl -k -v -XGET -H "Accept: application/vnd.kubernetes.protobuf, */*" -H "User-Agent: kubelet/v1.6.4 (linux/amd64) kubernetes/d6f4332" http://172.23.48.211:8080/api/v1/namespaces/ldtest-005/pods/ldtest-cf2eg79b08-3660220702-x0j2j

Oct 27 10:23:03 docker34-91 kubelet[24165]: I1027 10:23:03.004752 24165 round_trippers.go:417] GET http://172.23.48.211:8080/api/v1/namespaces/ldtest-005/pods/ldtest-cf2eg79b08-3660220702-x0j2j 404 Not Found in 0 milliseconds

Oct 27 10:23:03 docker34-91 kubelet[24165]: I1027 10:23:03.004761 24165 round_trippers.go:423] Response Headers:

Oct 27 10:23:03 docker34-91 kubelet[24165]: I1027 10:23:03.004765 24165 round_trippers.go:426] Date: Fri, 27 Oct 2017 02:23:03 GMT

Oct 27 10:23:03 docker34-91 kubelet[24165]: I1027 10:23:03.004769 24165 round_trippers.go:426] Content-Length: 154

Oct 27 10:23:03 docker34-91 kubelet[24165]: I1027 10:23:03.004773 24165 round_trippers.go:426] Content-Type: application/vnd.kubernetes.protobuf

Oct 27 10:23:03 docker34-91 kubelet[24165]: I1027 10:23:03.004812 24165 request.go:989] Response Body:

Oct 27 10:23:03 docker34-91 kubelet[24165]: 00000000 6b 38 73 00 0a 0c 0a 02 76 31 12 06 53 74 61 74 |k8s.....v1..Stat|

Oct 27 10:23:03 docker34-91 kubelet[24165]: 00000010 75 73 12 81 01 0a 04 0a 00 12 00 12 07 46 61 69 |us...........Fai|

Oct 27 10:23:03 docker34-91 kubelet[24165]: 00000020 6c 75 72 65 1a 33 70 6f 64 73 20 22 6c 64 74 65 |lure.3pods "ldte|

Oct 27 10:23:03 docker34-91 kubelet[24165]: 00000030 73 74 2d 63 66 32 65 67 37 39 62 30 38 2d 33 36 |st-cf2eg79b08-36|

Oct 27 10:23:03 docker34-91 kubelet[24165]: 00000040 36 30 32 32 30 37 30 32 2d 78 30 6a 32 6a 22 20 |60220702-x0j2j" |

Oct 27 10:23:03 docker34-91 kubelet[24165]: 00000050 6e 6f 74 20 66 6f 75 6e 64 22 08 4e 6f 74 46 6f |not found".NotFo|

Oct 27 10:23:03 docker34-91 kubelet[24165]: 00000060 75 6e 64 2a 2e 0a 22 6c 64 74 65 73 74 2d 63 66 |und*.."ldtest-cf|

Oct 27 10:23:03 docker34-91 kubelet[24165]: 00000070 32 65 67 37 39 62 30 38 2d 33 36 36 30 32 32 30 |2eg79b08-3660220|

Oct 27 10:23:03 docker34-91 kubelet[24165]: 00000080 37 30 32 2d 78 30 6a 32 6a 12 00 1a 04 70 6f 64 |702-x0j2j....pod|

Oct 27 10:23:03 docker34-91 kubelet[24165]: 00000090 73 28 00 30 94 03 1a 00 22 00 |s(.0....".|

Oct 27 10:23:03 docker34-91 kubelet[24165]: I1027 10:23:03.004841 24165 status_manager.go:425] Pod "ldtest-cf2eg79b08-3660220702-x0j2j" (8fd9d66f-bab7-11e7-92f6-3497f60062c3) does not exist on the server

Oct 27 10:23:03 docker34-91 kubelet[24165]: I1027 10:23:03.004853 24165 status_manager.go:410] Status Manager: syncPod in syncbatch. pod UID: "eb5a5f4a-baba-11e7-92f6-3497f60062c3"

Oct 27 10:23:03 docker34-91 kubelet[24165]: I1027 10:23:03.004921 24165 round_trippers.go:398] curl -k -v -XGET -H "Accept: application/vnd.kubernetes.protobuf, */*" -H "User-Agent: kubelet/v1.6.4 (linux/amd64) kubernetes/d6f4332" http://172.23.48.211:8080/api/v1/namespaces/ldtest-005/pods/ldtest-9b47680d12-2536408624-jhp18

Oct 27 10:23:03 docker34-91 kubelet[24165]: I1027 10:23:03.005436 24165 round_trippers.go:417] GET http://172.23.48.211:8080/api/v1/namespaces/ldtest-005/pods/ldtest-9b47680d12-2536408624-jhp18 404 Not Found in 0 milliseconds

Oct 27 10:23:03 docker34-91 kubelet[24165]: I1027 10:23:03.005446 24165 round_trippers.go:423] Response Headers:

Oct 27 10:23:03 docker34-91 kubelet[24165]: I1027 10:23:03.005450 24165 round_trippers.go:426] Content-Type: application/vnd.kubernetes.protobuf

Oct 27 10:23:03 docker34-91 kubelet[24165]: I1027 10:23:03.005454 24165 round_trippers.go:426] Date: Fri, 27 Oct 2017 02:23:03 GMT

Oct 27 10:23:03 docker34-91 kubelet[24165]: I1027 10:23:03.005457 24165 round_trippers.go:426] Content-Length: 154

Oct 27 10:23:03 docker34-91 kubelet[24165]: I1027 10:23:03.005499 24165 request.go:989] Response Body:

Oct 27 10:23:03 docker34-91 kubelet[24165]: 00000000 6b 38 73 00 0a 0c 0a 02 76 31 12 06 53 74 61 74 |k8s.....v1..Stat|

Oct 27 10:23:03 docker34-91 kubelet[24165]: 00000010 75 73 12 81 01 0a 04 0a 00 12 00 12 07 46 61 69 |us...........Fai|

Oct 27 10:23:03 docker34-91 kubelet[24165]: 00000020 6c 75 72 65 1a 33 70 6f 64 73 20 22 6c 64 74 65 |lure.3pods "ldte|

Oct 27 10:23:03 docker34-91 kubelet[24165]: 00000030 73 74 2d 39 62 34 37 36 38 30 64 31 32 2d 32 35 |st-9b47680d12-25|

Oct 27 10:23:03 docker34-91 kubelet[24165]: 00000040 33 36 34 30 38 36 32 34 2d 6a 68 70 31 38 22 20 |36408624-jhp18" |

Oct 27 10:23:03 docker34-91 kubelet[24165]: 00000050 6e 6f 74 20 66 6f 75 6e 64 22 08 4e 6f 74 46 6f |not found".NotFo|

Oct 27 10:23:03 docker34-91 kubelet[24165]: 00000060 75 6e 64 2a 2e 0a 22 6c 64 74 65 73 74 2d 39 62 |und*.."ldtest-9b|

Oct 27 10:23:03 docker34-91 kubelet[24165]: 00000070 34 37 36 38 30 64 31 32 2d 32 35 33 36 34 30 38 |47680d12-2536408|

Oct 27 10:23:03 docker34-91 kubelet[24165]: 00000080 36 32 34 2d 6a 68 70 31 38 12 00 1a 04 70 6f 64 |624-jhp18....pod|

Oct 27 10:23:03 docker34-91 kubelet[24165]: 00000090 73 28 00 30 94 03 1a 00 22 00 |s(.0....".|

Oct 27 10:23:03 docker34-91 kubelet[24165]: I1027 10:23:03.005526 24165 status_manager.go:425] Pod "ldtest-9b47680d12-2536408624-jhp18" (eb5a5f4a-baba-11e7-92f6-3497f60062c3) does not exist on the server

Oct 27 10:23:03 docker34-91 kubelet[24165]: I1027 10:23:03.005533 24165 status_manager.go:410] Status Manager: syncPod in syncbatch. pod UID: "2db95639-bab5-11e7-92f6-3497f60062c3"

Oct 27 10:23:03 docker34-91 kubelet[24165]: I1027 10:23:03.005588 24165 round_trippers.go:398] curl -k -v -XGET -H "Accept: application/vnd.kubernetes.protobuf, */*" -H "User-Agent: kubelet/v1.6.4 (linux/amd64) kubernetes/d6f4332" http://172.23.48.211:8080/api/v1/namespaces/ldtest-005/pods/ldtest-5f8ba1eag0-2191624653-dm374

Oct 27 10:23:03 docker34-91 kubelet[24165]: I1027 10:23:03.006150 24165 round_trippers.go:417] GET http://172.23.48.211:8080/api/v1/namespaces/ldtest-005/pods/ldtest-5f8ba1eag0-2191624653-dm374 404 Not Found in 0 milliseconds

Oct 27 10:23:03 docker34-91 kubelet[24165]: I1027 10:23:03.006176 24165 round_trippers.go:423] Response Headers:

Oct 27 10:23:03 docker34-91 kubelet[24165]: I1027 10:23:03.006182 24165 round_trippers.go:426] Date: Fri, 27 Oct 2017 02:23:03 GMT

Oct 27 10:23:03 docker34-91 kubelet[24165]: I1027 10:23:03.006189 24165 round_trippers.go:426] Content-Length: 154

Oct 27 10:23:03 docker34-91 kubelet[24165]: I1027 10:23:03.006195 24165 round_trippers.go:426] Content-Type: application/vnd.kubernetes.protobuf

Oct 27 10:23:03 docker34-91 kubelet[24165]: I1027 10:23:03.006251 24165 request.go:989] Response Body:

Oct 27 10:23:03 docker34-91 kubelet[24165]: 00000000 6b 38 73 00 0a 0c 0a 02 76 31 12 06 53 74 61 74 |k8s.....v1..Stat|

Oct 27 10:23:03 docker34-91 kubelet[24165]: 00000010 75 73 12 81 01 0a 04 0a 00 12 00 12 07 46 61 69 |us...........Fai|

Oct 27 10:23:03 docker34-91 kubelet[24165]: 00000020 6c 75 72 65 1a 33 70 6f 64 73 20 22 6c 64 74 65 |lure.3pods "ldte|

Oct 27 10:23:03 docker34-91 kubelet[24165]: 00000030 73 74 2d 35 66 38 62 61 31 65 61 67 30 2d 32 31 |st-5f8ba1eag0-21|

Oct 27 10:23:03 docker34-91 kubelet[24165]: 00000040 39 31 36 32 34 36 35 33 2d 64 6d 33 37 34 22 20 |91624653-dm374" |

Oct 27 10:23:03 docker34-91 kubelet[24165]: 00000050 6e 6f 74 20 66 6f 75 6e 64 22 08 4e 6f 74 46 6f |not found".NotFo|

Oct 27 10:23:03 docker34-91 kubelet[24165]: 00000060 75 6e 64 2a 2e 0a 22 6c 64 74 65 73 74 2d 35 66 |und*.."ldtest-5f|

Oct 27 10:23:03 docker34-91 kubelet[24165]: 00000070 38 62 61 31 65 61 67 30 2d 32 31 39 31 36 32 34 |8ba1eag0-2191624|

Oct 27 10:23:03 docker34-91 kubelet[24165]: 00000080 36 35 33 2d 64 6d 33 37 34 12 00 1a 04 70 6f 64 |653-dm374....pod|

Oct 27 10:23:03 docker34-91 kubelet[24165]: 00000090 73 28 00 30 94 03 1a 00 22 00 |s(.0....".|

Oct 27 10:23:03 docker34-91 kubelet[24165]: I1027 10:23:03.006297 24165 status_manager.go:425] Pod "ldtest-5f8ba1eag0-2191624653-dm374" (2db95639-bab5-11e7-92f6-3497f60062c3) does not exist on the server

Oct 27 10:23:03 docker34-91 kubelet[24165]: I1027 10:23:03.006330 24165 status_manager.go:410] Status Manager: syncPod in syncbatch. pod UID: "ecf58d7f-bab2-11e7-92f6-3497f60062c3"

Oct 27 10:23:03 docker34-91 kubelet[24165]: I1027 10:23:03.006421 24165 round_trippers.go:398] curl -k -v -XGET -H "Accept: application/vnd.kubernetes.protobuf, */*" -H "User-Agent: kubelet/v1.6.4 (linux/amd64) kubernetes/d6f4332" http://172.23.48.211:8080/api/v1/namespaces/ldtest-005/pods/ldtest-0fe4761ce1-763135991-2gv5x

Oct 27 10:23:03 docker34-91 kubelet[24165]: I1027 10:23:03.006983 24165 round_trippers.go:417] GET http://172.23.48.211:8080/api/v1/namespaces/ldtest-005/pods/ldtest-0fe4761ce1-763135991-2gv5x 404 Not Found in 0 milliseconds

Oct 27 10:23:03 docker34-91 kubelet[24165]: I1027 10:23:03.006995 24165 round_trippers.go:423] Response Headers:

Oct 27 10:23:03 docker34-91 kubelet[24165]: I1027 10:23:03.007001 24165 round_trippers.go:426] Content-Type: application/vnd.kubernetes.protobuf

Oct 27 10:23:03 docker34-91 kubelet[24165]: I1027 10:23:03.007007 24165 round_trippers.go:426] Date: Fri, 27 Oct 2017 02:23:03 GMT

Oct 27 10:23:03 docker34-91 kubelet[24165]: I1027 10:23:03.007014 24165 round_trippers.go:426] Content-Length: 151

Oct 27 10:23:03 docker34-91 kubelet[24165]: I1027 10:23:03.007064 24165 request.go:989] Response Body:

Oct 27 10:23:03 docker34-91 kubelet[24165]: 00000000 6b 38 73 00 0a 0c 0a 02 76 31 12 06 53 74 61 74 |k8s.....v1..Stat|

Oct 27 10:23:03 docker34-91 kubelet[24165]: 00000010 75 73 12 7f 0a 04 0a 00 12 00 12 07 46 61 69 6c |us..........Fail|

Oct 27 10:23:03 docker34-91 kubelet[24165]: 00000020 75 72 65 1a 32 70 6f 64 73 20 22 6c 64 74 65 73 |ure.2pods "ldtes|

Oct 27 10:23:03 docker34-91 kubelet[24165]: 00000030 74 2d 30 66 65 34 37 36 31 63 65 31 2d 37 36 33 |t-0fe4761ce1-763|

Oct 27 10:23:03 docker34-91 kubelet[24165]: 00000040 31 33 35 39 39 31 2d 32 67 76 35 78 22 20 6e 6f |135991-2gv5x" no|

Oct 27 10:23:03 docker34-91 kubelet[24165]: 00000050 74 20 66 6f 75 6e 64 22 08 4e 6f 74 46 6f 75 6e |t found".NotFoun|

Oct 27 10:23:03 docker34-91 kubelet[24165]: 00000060 64 2a 2d 0a 21 6c 64 74 65 73 74 2d 30 66 65 34 |d*-.!ldtest-0fe4|

Oct 27 10:23:03 docker34-91 kubelet[24165]: 00000070 37 36 31 63 65 31 2d 37 36 33 31 33 35 39 39 31 |761ce1-763135991|

Oct 27 10:23:03 docker34-91 kubelet[24165]: 00000080 2d 32 67 76 35 78 12 00 1a 04 70 6f 64 73 28 00 |-2gv5x....pods(.|

Oct 27 10:23:03 docker34-91 kubelet[24165]: 00000090 30 94 03 1a 00 22 00 |0....".|

Oct 27 10:23:03 docker34-91 kubelet[24165]: I1027 10:23:03.007106 24165 status_manager.go:425] Pod "ldtest-0fe4761ce1-763135991-2gv5x" (ecf58d7f-bab2-11e7-92f6-3497f60062c3) does not exist on the server

Oct 27 10:23:03 docker34-91 kubelet[24165]: I1027 10:23:03.077334 24165 config.go:101] Looking for [api file], have seen map[file:{} api:{}]

Oct 27 10:23:03 docker34-91 kubelet[24165]: I1027 10:23:03.177546 24165 config.go:101] Looking for [api file], have seen map[file:{} api:{}]

Oct 27 10:23:03 docker34-91 kubelet[24165]: I1027 10:23:03.277737 24165 config.go:101] Looking for [api file], have seen map[file:{} api:{}]

Oct 27 10:23:03 docker34-91 kubelet[24165]: I1027 10:23:03.377939 24165 config.go:101] Looking for [api file], have seen map[file:{} api:{}]

Oct 27 10:23:03 docker34-91 kubelet[24165]: I1027 10:23:03.478169 24165 config.go:101] Looking for [api file], have seen map[file:{} api:{}]

Oct 27 10:23:03 docker34-91 kubelet[24165]: I1027 10:23:03.578369 24165 config.go:101] Looking for [api file], have seen map[file:{} api:{}]

Oct 27 10:23:03 docker34-91 kubelet[24165]: I1027 10:23:03.603649 24165 eviction_manager.go:197] eviction manager: synchronize housekeeping

Oct 27 10:23:03 docker34-91 kubelet[24165]: I1027 10:23:03.678573 24165 config.go:101] Looking for [api file], have seen map[file:{} api:{}]

Oct 27 10:23:03 docker34-91 kubelet[24165]: I1027 10:23:03.682080 24165 summary.go:389] Missing default interface "eth0" for node:172.23.34.91

Oct 27 10:23:03 docker34-91 kubelet[24165]: I1027 10:23:03.682132 24165 summary.go:389] Missing default interface "eth0" for pod:kube-system_kube-proxy-qcft5

Oct 27 10:23:03 docker34-91 kubelet[24165]: I1027 10:23:03.682176 24165 helpers.go:744] eviction manager: observations: signal=imagefs.available, available: 515801344Ki, capacity: 511750Mi, time: 2017-10-27 10:22:56.499173632 +0800 CST

Oct 27 10:23:03 docker34-91 kubelet[24165]: I1027 10:23:03.682197 24165 helpers.go:744] eviction manager: observations: signal=imagefs.inodesFree, available: 523222251, capacity: 500Mi, time: 2017-10-27 10:22:56.499173632 +0800 CST

Oct 27 10:23:03 docker34-91 kubelet[24165]: I1027 10:23:03.682203 24165 helpers.go:746] eviction manager: observations: signal=allocatableMemory.available, available: 65544340Ki, capacity: 65581868Ki

Oct 27 10:23:03 docker34-91 kubelet[24165]: I1027 10:23:03.682207 24165 helpers.go:744] eviction manager: observations: signal=memory.available, available: 57973412Ki, capacity: 65684268Ki, time: 2017-10-27 10:22:56.499173632 +0800 CST

Oct 27 10:23:03 docker34-91 kubelet[24165]: I1027 10:23:03.682213 24165 helpers.go:744] eviction manager: observations: signal=nodefs.available, available: 99175128Ki, capacity: 102350Mi, time: 2017-10-27 10:22:56.499173632 +0800 CST

Oct 27 10:23:03 docker34-91 kubelet[24165]: I1027 10:23:03.682218 24165 helpers.go:744] eviction manager: observations: signal=nodefs.inodesFree, available: 104818019, capacity: 100Mi, time: 2017-10-27 10:22:56.499173632 +0800 CST

Oct 27 10:23:03 docker34-91 kubelet[24165]: I1027 10:23:03.682233 24165 eviction_manager.go:292] eviction manager: no resources are starved

Oct 27 10:23:03 docker34-91 kubelet[24165]: I1027 10:23:03.778792 24165 config.go:101] Looking for [api file], have seen map[file:{} api:{}]

Oct 27 10:23:03 docker34-91 kubelet[24165]: I1027 10:23:03.879040 24165 config.go:101] Looking for [api file], have seen map[file:{} api:{}]

Oct 27 10:23:03 docker34-91 kubelet[24165]: I1027 10:23:03.979304 24165 config.go:101] Looking for [api file], have seen map[file:{} api:{}]

Oct 27 10:23:04 docker34-91 kubelet[24165]: I1027 10:23:04.079534 24165 config.go:101] Looking for [api file], have seen map[file:{} api:{}]

Oct 27 10:23:04 docker34-91 kubelet[24165]: I1027 10:23:04.179753 24165 config.go:101] Looking for [api file], have seen map[api:{} file:{}]

Oct 27 10:23:04 docker34-91 kubelet[24165]: I1027 10:23:04.280026 24165 config.go:101] Looking for [api file], have seen map[api:{} file:{}]

Oct 27 10:23:04 docker34-91 kubelet[24165]: I1027 10:23:04.380246 24165 config.go:101] Looking for [api file], have seen map[file:{} api:{}]

Oct 27 10:23:04 docker34-91 kubelet[24165]: I1027 10:23:04.480450 24165 config.go:101] Looking for [api file], have seen map[file:{} api:{}]

Oct 27 10:23:04 docker34-91 kubelet[24165]: I1027 10:23:04.580695 24165 config.go:101] Looking for [api file], have seen map[file:{} api:{}]

Oct 27 10:23:04 docker34-91 kubelet[24165]: I1027 10:23:04.680957 24165 config.go:101] Looking for [api file], have seen map[file:{} api:{}]

Oct 27 10:23:04 docker34-91 kubelet[24165]: I1027 10:23:04.781224 24165 config.go:101] Looking for [api file], have seen map[file:{} api:{}]

Oct 27 10:23:04 docker34-91 kubelet[24165]: I1027 10:23:04.881418 24165 config.go:101] Looking for [api file], have seen map[file:{} api:{}]

Oct 27 10:23:04 docker34-91 kubelet[24165]: I1027 10:23:04.981643 24165 config.go:101] Looking for [api file], have seen map[file:{} api:{}]

Oct 27 10:23:05 docker34-91 kubelet[24165]: I1027 10:23:05.081882 24165 config.go:101] Looking for [api file], have seen map[file:{} api:{}]

Oct 27 10:23:05 docker34-91 kubelet[24165]: I1027 10:23:05.182810 24165 config.go:101] Looking for [api file], have seen map[file:{} api:{}]

Oct 27 10:23:05 docker34-91 kubelet[24165]: I1027 10:23:05.283410 24165 config.go:101] Looking for [api file], have seen map[file:{} api:{}]

Oct 27 10:23:05 docker34-91 kubelet[24165]: I1027 10:23:05.383626 24165 config.go:101] Looking for [api file], have seen map[file:{} api:{}]

Oct 27 10:23:05 docker34-91 kubelet[24165]: I1027 10:23:05.483942 24165 config.go:101] Looking for [api file], have seen map[file:{} api:{}]

Oct 27 10:23:05 docker34-91 kubelet[24165]: I1027 10:23:05.584211 24165 config.go:101] Looking for [api file], have seen map[file:{} api:{}]

Oct 27 10:23:05 docker34-91 kubelet[24165]: I1027 10:23:05.684460 24165 config.go:101] Looking for [api file], have seen map[file:{} api:{}]

Oct 27 10:23:05 docker34-91 kubelet[24165]: I1027 10:23:05.784699 24165 config.go:101] Looking for [api file], have seen map[file:{} api:{}]

Oct 27 10:23:05 docker34-91 kubelet[24165]: I1027 10:23:05.884949 24165 config.go:101] Looking for [api file], have seen map[file:{} api:{}]

Oct 27 10:23:05 docker34-91 kubelet[24165]: I1027 10:23:05.960855 24165 factory.go:115] Factory "docker" was unable to handle container "/system.slice/data-docker-overlay-c0d3c4b3834cfe9f12cd5c35345cab9c8e71bb64c689c8aea7a458c119a5a54e-merged.mount"

Oct 27 10:23:05 docker34-91 kubelet[24165]: I1027 10:23:05.960885 24165 factory.go:108] Factory "systemd" can handle container "/system.slice/data-docker-overlay-c0d3c4b3834cfe9f12cd5c35345cab9c8e71bb64c689c8aea7a458c119a5a54e-merged.mount", but ignoring.

Oct 27 10:23:05 docker34-91 kubelet[24165]: I1027 10:23:05.960906 24165 manager.go:867] ignoring container "/system.slice/data-docker-overlay-c0d3c4b3834cfe9f12cd5c35345cab9c8e71bb64c689c8aea7a458c119a5a54e-merged.mount"

Oct 27 10:23:05 docker34-91 kubelet[24165]: I1027 10:23:05.960912 24165 factory.go:115] Factory "docker" was unable to handle container "/system.slice/data-docker-overlay-ce9656ff9d3cd03baaf93e42d0874377fa37bfde6c9353b3ba954c90bf4332f3-merged.mount"

Oct 27 10:23:05 docker34-91 kubelet[24165]: I1027 10:23:05.960919 24165 factory.go:108] Factory "systemd" can handle container "/system.slice/data-docker-overlay-ce9656ff9d3cd03baaf93e42d0874377fa37bfde6c9353b3ba954c90bf4332f3-merged.mount", but ignoring.

Oct 27 10:23:05 docker34-91 kubelet[24165]: I1027 10:23:05.960926 24165 manager.go:867] ignoring container "/system.slice/data-docker-overlay-ce9656ff9d3cd03baaf93e42d0874377fa37bfde6c9353b3ba954c90bf4332f3-merged.mount"

Oct 27 10:23:05 docker34-91 kubelet[24165]: I1027 10:23:05.960931 24165 factory.go:115] Factory "docker" was unable to handle container "/system.slice/data-docker-overlay-b3600c0fe81445773b9241c5d1da8b1f97612d0a235f8b32139478a5717f79e1-merged.mount"

Oct 27 10:23:05 docker34-91 kubelet[24165]: I1027 10:23:05.960937 24165 factory.go:108] Factory "systemd" can handle container "/system.slice/data-docker-overlay-b3600c0fe81445773b9241c5d1da8b1f97612d0a235f8b32139478a5717f79e1-merged.mount", but ignoring.

Oct 27 10:23:05 docker34-91 kubelet[24165]: I1027 10:23:05.960944 24165 manager.go:867] ignoring container "/system.slice/data-docker-overlay-b3600c0fe81445773b9241c5d1da8b1f97612d0a235f8b32139478a5717f79e1-merged.mount"

Oct 27 10:23:05 docker34-91 kubelet[24165]: I1027 10:23:05.960949 24165 factory.go:115] Factory "docker" was unable to handle container "/system.slice/data-docker-overlay-ed2fe0d57c56cf6b051e1bda1ca0185ceef4756b1a8f9af4c19f4e512bcc60f4-merged.mount"

Oct 27 10:23:05 docker34-91 kubelet[24165]: I1027 10:23:05.960955 24165 factory.go:108] Factory "systemd" can handle container "/system.slice/data-docker-overlay-ed2fe0d57c56cf6b051e1bda1ca0185ceef4756b1a8f9af4c19f4e512bcc60f4-merged.mount", but ignoring.

Oct 27 10:23:05 docker34-91 kubelet[24165]: I1027 10:23:05.960979 24165 manager.go:867] ignoring container "/system.slice/data-docker-overlay-ed2fe0d57c56cf6b051e1bda1ca0185ceef4756b1a8f9af4c19f4e512bcc60f4-merged.mount"

Oct 27 10:23:05 docker34-91 kubelet[24165]: I1027 10:23:05.960984 24165 factory.go:115] Factory "docker" was unable to handle container "/system.slice/data-docker-overlay-0ba6483a0117c539493cd269be9f87d31d1d61aa813e7e0381c5f5d8b0623275-merged.mount"

Oct 27 10:23:05 docker34-91 kubelet[24165]: I1027 10:23:05.960990 24165 factory.go:108] Factory "systemd" can handle container "/system.slice/data-docker-overlay-0ba6483a0117c539493cd269be9f87d31d1d61aa813e7e0381c5f5d8b0623275-merged.mount", but ignoring.

Oct 27 10:23:05 docker34-91 kubelet[24165]: I1027 10:23:05.960997 24165 manager.go:867] ignoring container "/system.slice/data-docker-overlay-0ba6483a0117c539493cd269be9f87d31d1d61aa813e7e0381c5f5d8b0623275-merged.mount"

Hit a similar issue:

Oct 28 09:15:38 ip-10-72-17-119.us-west-2.compute.internal kubelet[3299]: E1028 09:15:38.711430 3299 pod_workers.go:182] Error syncing pod 7d3b94f3-afa7-11e7-aaec-06936c368d26 ("pickup-566929041-bn8t9_staging(7d3b94f3-afa7-11e7-aaec-06936c368d26)"), skipping: rpc error: code = 4 desc = context deadline exceeded

Oct 28 09:15:51 ip-10-72-17-119.us-west-2.compute.internal kubelet[3299]: E1028 09:15:51.439135 3299 kuberuntime_manager.go:843] PodSandboxStatus of sandbox "9c1c1f2d4a9d277a41a97593c330f41e00ca12f3ad858c19f61fd155d18d795e" for pod "pickup-566929041-bn8t9_staging(7d3b94f3-afa7-11e7-aaec-06936c368d26)" error: rpc error: code = 4 desc = context deadline exceeded

Oct 28 09:15:51 ip-10-72-17-119.us-west-2.compute.internal kubelet[3299]: E1028 09:15:51.439188 3299 generic.go:241] PLEG: Ignoring events for pod pickup-566929041-bn8t9/staging: rpc error: code = 4 desc = context deadline exceeded

Oct 28 09:15:51 ip-10-72-17-119.us-west-2.compute.internal kubelet[3299]: E1028 09:15:51.711168 3299 pod_workers.go:182] Error syncing pod 7d3b94f3-afa7-11e7-aaec-06936c368d26 ("pickup-566929041-bn8t9_staging(7d3b94f3-afa7-11e7-aaec-06936c368d26)"), skipping: rpc error: code = 4 desc = context deadline exceeded

Oct 28 09:16:03 ip-10-72-17-119.us-west-2.compute.internal kubelet[3299]: E1028 09:16:03.711164 3299 pod_workers.go:182] Error syncing pod 7d3b94f3-afa7-11e7-aaec-06936c368d26 ("pickup-566929041-bn8t9_staging(7d3b94f3-afa7-11e7-aaec-06936c368d26)"), skipping: rpc error: code = 4 desc = context deadline exceeded

Oct 28 09:16:18 ip-10-72-17-119.us-west-2.compute.internal kubelet[3299]: E1028 09:16:18.715381 3299 pod_workers.go:182] Error syncing pod 7d3b94f3-afa7-11e7-aaec-06936c368d26 ("pickup-566929041-bn8t9_staging(7d3b94f3-afa7-11e7-aaec-06936c368d26)"), skipping: rpc error: code = 4 desc = context deadline exceeded

Oct 28 09:16:33 ip-10-72-17-119.us-west-2.compute.internal kubelet[3299]: E1028 09:16:33.711198 3299 pod_workers.go:182] Error syncing pod 7d3b94f3-afa7-11e7-aaec-06936c368d26 ("pickup-566929041-bn8t9_staging(7d3b94f3-afa7-11e7-aaec-06936c368d26)"), skipping: rpc error: code = 4 desc = context deadline exceeded

Oct 28 09:16:46 ip-10-72-17-119.us-west-2.compute.internal kubelet[3299]: E1028 09:16:46.712983 3299 pod_workers.go:182] Error syncing pod 7d3b94f3-afa7-11e7-aaec-06936c368d26 ("pickup-566929041-bn8t9_staging(7d3b94f3-afa7-11e7-aaec-06936c368d26)"), skipping: rpc error: code = 4 desc = context deadline exceeded

Oct 28 09:16:51 ip-10-72-17-119.us-west-2.compute.internal kubelet[3299]: I1028 09:16:51.711142 3299 kubelet.go:1820] skipping pod synchronization - [PLEG is not healthy: pleg was last seen active 3m0.31269053s ago; threshold is 3m0s]

Oct 28 09:16:56 ip-10-72-17-119.us-west-2.compute.internal kubelet[3299]: I1028 09:16:56.711341 3299 kubelet.go:1820] skipping pod synchronization - [PLEG is not healthy: pleg was last seen active 3m5.312886434s ago; threshold is 3m0s]

Oct 28 09:17:01 ip-10-72-17-119.us-west-2.compute.internal kubelet[3299]: I1028 09:17:01.351771 3299 kubelet_node_status.go:734] Node became not ready: {Type:Ready Status:False LastHeartbeatTime:2017-10-28 09:17:01.35173325 +0000 UTC LastTransitionTime:2017-10-28 09:17:01.35173325 +0000 UTC Reason:KubeletNotReady Message:PLEG is not healthy: pleg was last seen active 3m9.95330596s ago; threshold is 3m0s}

Oct 28 09:17:01 ip-10-72-17-119.us-west-2.compute.internal kubelet[3299]: I1028 09:17:01.711552 3299 kubelet.go:1820] skipping pod synchronization - [PLEG is not healthy: pleg was last seen active 3m10.31309378s ago; threshold is 3m0s]

Oct 28 09:17:06 ip-10-72-17-119.us-west-2.compute.internal kubelet[3299]: I1028 09:17:06.711871 3299 kubelet.go:1820] skipping pod synchronization - [PLEG is not healthy: pleg was last seen active 3m15.313406671s ago; threshold is 3m0s]

Oct 28 09:17:11 ip-10-72-17-119.us-west-2.compute.internal kubelet[3299]: I1028 09:17:11.712162 3299 kubelet.go:1820] skipping pod synchronization - [PLEG is not healthy: pleg was last seen active 3m20.313691126s ago; threshold is 3m0s]

Oct 28 09:17:12 ip-10-72-17-119.us-west-2.compute.internal kubelet[3299]: 2017/10/28 09:17:12 transport: http2Server.HandleStreams failed to read frame: read unix /var/run/dockershim.sock->@: use of closed network connection

Oct 28 09:17:12 ip-10-72-17-119.us-west-2.compute.internal kubelet[3299]: 2017/10/28 09:17:12 transport: http2Client.notifyError got notified that the client transport was broken EOF.

Oct 28 09:17:12 ip-10-72-17-119.us-west-2.compute.internal kubelet[3299]: 2017/10/28 09:17:12 grpc: addrConn.resetTransport failed to create client transport: connection error: desc = "transport: dial unix /var/run/dockershim.sock: connect: no such file or directory"; Reconnecting to {/var/run/dockershim.sock <nil>}

Oct 28 09:17:12 ip-10-72-17-119.us-west-2.compute.internal kubelet[3299]: E1028 09:17:12.556535 3299 kuberuntime_manager.go:843] PodSandboxStatus of sandbox "9c1c1f2d4a9d277a41a97593c330f41e00ca12f3ad858c19f61fd155d18d795e" for pod "pickup-566929041-bn8t9_staging(7d3b94f3-afa7-11e7-aaec-06936c368d26)" error: rpc error: code = 13 desc = transport is closing

After these messages, kubelet went into a restart loop.

Oct 28 09:17:12 ip-10-72-17-119.us-west-2.compute.internal systemd[1]: kube-kubelet.service: Main process exited, code=exited, status=1/FAILURE

Oct 28 09:18:42 ip-10-72-17-119.us-west-2.compute.internal systemd[1]: kube-kubelet.service: State 'stop-final-sigterm' timed out. Killing.

Oct 28 09:18:42 ip-10-72-17-119.us-west-2.compute.internal systemd[1]: kube-kubelet.service: Killing process 1661 (calico) with signal SIGKILL.

Oct 28 09:20:12 ip-10-72-17-119.us-west-2.compute.internal systemd[1]: kube-kubelet.service: Processes still around after final SIGKILL. Entering failed mode.

Oct 28 09:20:12 ip-10-72-17-119.us-west-2.compute.internal systemd[1]: Stopped Kubernetes Kubelet.

Oct 28 09:20:12 ip-10-72-17-119.us-west-2.compute.internal systemd[1]: kube-kubelet.service: Unit entered failed state.

Oct 28 09:20:12 ip-10-72-17-119.us-west-2.compute.internal systemd[1]: kube-kubelet.service: Failed with result 'exit-code'.

The last messages are below; it looks like a Docker issue:

Oct 28 09:17:12 ip-10-72-17-119.us-west-2.compute.internal kubelet[3299]: 2017/10/28 09:17:12 transport: http2Server.HandleStreams failed to read frame: read unix /var/run/dockershim.sock->@: use of closed network connection

Oct 28 09:17:12 ip-10-72-17-119.us-west-2.compute.internal kubelet[3299]: 2017/10/28 09:17:12 transport: http2Client.notifyError got notified that the client transport was broken EOF.

Oct 28 09:17:12 ip-10-72-17-119.us-west-2.compute.internal kubelet[3299]: 2017/10/28 09:17:12 grpc: addrConn.resetTransport failed to create client transport: connection error: desc = "transport: dial unix /var/run/dockershim.sock: connect: no such file or directory"; Reconnecting to {/var/run/dockershim.sock <nil>}

The last messages are from dockershim. Those logs would also be very helpful.

Hi, we are on Kubernetes 1.7.10, deployed with Kops on AWS, using Calico and CoreOS.

We have the same PLEG issue:

Ready False KubeletNotReady PLEG is not healthy: pleg was last seen active 3m29.396986143s ago; threshold is 3m0s
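For anyone reading these condition messages: the two durations are Go-style duration strings, and the node is reported NotReady once the time since PLEG was last seen active exceeds the threshold. Below is a minimal Python sketch of that comparison. The function names are made up for illustration and this is not the kubelet's actual code; only the message format and the 3m0s threshold come from the logs in this thread.

```python
import re

# Seconds per unit for the units that appear in Go duration strings.
_UNIT_SECONDS = {"h": 3600.0, "m": 60.0, "s": 1.0,
                 "ms": 1e-3, "us": 1e-6, "µs": 1e-6, "ns": 1e-9}
# Two-letter units are listed first so "ms" is not read as "m" + "s".
_TOKEN = re.compile(r"(\d+(?:\.\d+)?)(ms|us|µs|ns|h|m|s)")


def parse_go_duration(text):
    """Convert a Go-style duration such as '3m29.396986143s' to seconds."""
    tokens = _TOKEN.findall(text)
    if not tokens or "".join(n + u for n, u in tokens) != text:
        raise ValueError(f"not a duration: {text!r}")
    return sum(float(n) * _UNIT_SECONDS[u] for n, u in tokens)


def pleg_is_healthy(last_seen, threshold="3m0s"):
    """Healthy as long as the last activity is not older than the threshold."""
    return parse_go_duration(last_seen) <= parse_go_duration(threshold)


print(pleg_is_healthy("3m29.396986143s"))  # False
print(pleg_is_healthy("2m59s"))            # True
```

The same parser can be pointed at the journalctl lines earlier in the thread to see how far past the threshold each node went.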

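The only additional issue we have, and I think it has been happening lately, especially since 1.7.8, shows up on redeploys. For example, when we roll out a new version of an app, the old replica set scales down and the new replica set spins up along with its pods, but the pods from the previous deployment version stay in the "Terminating" state.

Then we have to force kill them manually.

Same PLEG issue here with k8s 1.8.1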

+1

1.6.9

with Docker 1.12.6

+1

1.8.2

+1

1.6.0

- 1.8.4

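And one more question:

- Yes, CPU and memory were at nearly 100%. But my question is: why aren't the pods assigned to other nodes when the node has been NotReady for a long time?

+1 Nodes going into the NotReady state had been happening almost consistently for the past two days after upgrading to Kubernetes 1.8.5. I think the problem in my case was that I had not upgraded the cluster autoscaler. After upgrading the autoscaler to 1.03 (helm 0.3.0), I no longer see any nodes in the "NotReady" state. It looks like I have a stable cluster again.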

- kops:1.8.0

- kubectl:1.8.5

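- helm: 2.7.2

- cluster-autoscaler: v0.6.0 ---> upgraded to 1.03 (helm 0.3.0)

Even if Docker hangs, PLEG should not become inactive.

Same here, 1.8.5

Not upgraded from a lower version; the cluster was created from scratch.

Resources are sufficient.

memory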

# free -mg

total used free shared buff/cache available

Mem: 15 2 8 0 5 12

Swap: 15 0 15

top

top - 04:34:39 up 24 days, 6:23, 2 users, load average: 31.56, 83.38, 66.29

Tasks: 432 total, 5 running, 427 sleeping, 0 stopped, 0 zombie

%Cpu(s): 9.2 us, 1.9 sy, 0.0 ni, 87.5 id, 1.3 wa, 0.0 hi, 0.1 si, 0.0 st

KiB Mem : 16323064 total, 8650144 free, 2417236 used, 5255684 buff/cache

KiB Swap: 16665596 total, 16646344 free, 19252 used. 12595460 avail Mem

PID USER PR NI VIRT RES SHR S %CPU %MEM TIME+ COMMAND

31905 root 20 0 1622320 194096 51280 S 14.9 1.2 698:10.66 kubelet

19402 root 20 0 12560 9696 1424 R 10.3 0.1 442:05.00 memtester

2626 root 20 0 12560 9660 1392 R 9.6 0.1 446:41.38 memtester

8680 root 20 0 12560 9660 1396 R 9.6 0.1 444:34.38 memtester

15004 root 20 0 12560 9704 1432 R 9.6 0.1 443:04.98 memtester

1663 root 20 0 8424940 424912 20556 S 4.6 2.6 2809:24 dockerd

409 root 20 0 49940 37068 20648 S 2.3 0.2 144:03.37 calico-felix

551 root 20 0 631788 20952 11824 S 1.3 0.1 100:36.78 costor

9527 root 20 0 10.529g 24800 13612 S 1.0 0.2 3:43.55 etcd

2608 root 20 0 421936 6040 3288 S 0.7 0.0 31:29.78 containerd-shim

4136 root 20 0 780344 24580 12316 S 0.7 0.2 45:58.60 costor

4208 root 20 0 755756 22208 12176 S 0.7 0.1 41:49.58 costor

8665 root 20 0 210344 5960 3208 S 0.7 0.0 31:27.75 cont

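We have now found the following situation.

Docker Storage Setup was configured to use 80% of the thin pool, and the kubelet hard eviction threshold was 10%. The two settings did not match.

Docker somehow crashed internally, and kubelet got this PLEG error.

After raising the kubelet hard eviction threshold (imagefs.available) to 20%, the threshold was hit before the Docker storage filled up, and kubelet started deleting old images.

In 1.8 we changed from image-gc-threshold to hard-eviction and picked parameters that did not match.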
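To make the threshold point concrete, here is a small Python sketch of how a percentage threshold written as `imagefs.available<20%` can be evaluated. The capacity and available figures are hypothetical, the helper names are made up for illustration, and this is only an approximation of the idea, not how kubelet computes its eviction signals.

```python
def parse_threshold(spec):
    """Split a spec like 'imagefs.available<20%' into (signal, fraction)."""
    signal, _, value = spec.partition("<")
    if not value.endswith("%"):
        raise ValueError("this sketch only handles percentage thresholds")
    return signal, float(value[:-1]) / 100.0


def threshold_crossed(spec, capacity_bytes, available_bytes):
    """True when the available share has dropped below the threshold."""
    _, fraction = parse_threshold(spec)
    return available_bytes / capacity_bytes < fraction


GIB = 1024 ** 3
# Hypothetical image filesystem: 100 GiB in total, 15 GiB still available.
print(threshold_crossed("imagefs.available<10%", 100 * GIB, 15 * GIB))  # False
print(threshold_crossed("imagefs.available<20%", 100 * GIB, 15 * GIB))  # True
```

With these made-up numbers the 10% threshold never triggers cleanup while the 20% threshold does, which is the kind of mismatch described above.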

We will keep observing our cluster for this now.

Kube: 1.8.5

Docker:1.12.6

OS:RHEL7

Looking at the internal kubelet_pleg_relist_latency_microseconds metric from Prometheus, this looks suspicious.
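If you want to check the same metric without a dashboard, a rough Python sketch like the one below pulls the quantiles out of a metrics dump and flags the ones above a limit. It assumes the metric is exposed as a summary with `quantile` labels in the Prometheus text format; the sample values are invented for illustration and are not from my cluster.

```python
import re

METRIC = "kubelet_pleg_relist_latency_microseconds"
_LINE = re.compile(r"^" + METRIC + r'\{quantile="([^"]+)"\}\s+(\S+)$')

# Hypothetical scrape output; the numbers are made up.
SAMPLE = """\
kubelet_pleg_relist_latency_microseconds{quantile="0.5"} 1.2e+06
kubelet_pleg_relist_latency_microseconds{quantile="0.9"} 4.5e+06
kubelet_pleg_relist_latency_microseconds{quantile="0.99"} 1.9e+08
kubelet_pleg_relist_latency_microseconds_sum 3.1e+10
kubelet_pleg_relist_latency_microseconds_count 8123
"""


def relist_quantiles(metrics_text):
    """Return {quantile: latency in seconds} for the PLEG relist metric."""
    result = {}
    for line in metrics_text.splitlines():
        match = _LINE.match(line.strip())
        if match:
            result[float(match.group(1))] = float(match.group(2)) / 1e6
    return result


def slow_quantiles(metrics_text, limit_seconds):
    """Keep only the quantiles whose latency exceeds the limit."""
    quantiles = relist_quantiles(metrics_text)
    return {q: s for q, s in quantiles.items() if s > limit_seconds}


print(slow_quantiles(SAMPLE, limit_seconds=180))  # {0.99: 190.0}
```

A relist latency anywhere near the 3m0s threshold from the NotReady messages would be consistent with the flapping reported in this thread.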

Kube 1.8.4 installed with kops on CoreOS

docker info

Containers: 246

Running: 222

Paused: 0

Stopped: 24

Images: 30

Server Version: 17.09.0-ce

Storage Driver: overlay

Backing Filesystem: extfs

Supports d_type: true

Logging Driver: json-file

Cgroup Driver: cgroupfs

Plugins:

Volume: local

Network: bridge host macvlan null overlay

Log: awslogs fluentd gcplogs gelf journald json-file logentries splunk syslog

Swarm: inactive

Runtimes: runc

Default Runtime: runc

Init Binary: docker-init

containerd version: 06b9cb35161009dcb7123345749fef02f7cea8e0

runc version: 3f2f8b84a77f73d38244dd690525642a72156c64

init version: v0.13.2 (expected: 949e6facb77383876aeff8a6944dde66b3089574)

Security Options:

seccomp

Profile: default

selinux

Kernel Version: 4.13.16-coreos-r2

Operating System: Container Linux by CoreOS 1576.4.0 (Ladybug)

OSType: linux

Architecture: x86_64

CPUs: 8

Total Memory: 14.69GiB

Name: ip-172-20-120-53.eu-west-1.compute.internal

ID: SI53:ECLM:HXFE:LOVY:STTS:C4X2:WRFK:UGBN:7NYP:4N3E:MZGS:EAVM

Docker Root Dir: /var/lib/docker

Debug Mode (client): false

Debug Mode (server): false

Registry: https://index.docker.io/v1/

Experimental: false

Insecure Registries:

127.0.0.0/8

Live Restore Enabled: false

+1

origin v3.7.0

kubernetes v1.7.6

docker v1.12.6

OS CentOS 7.4

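It seems the runtime container GC affects pod creation and termination.

Let me try disabling GC and report what happens.

In my case, the CNI does not handle the situation.

According to my analysis, the code sequence is as follows: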

1. kuberuntime_gc.go: client.StopPodSandbox (Timeout Default: 2m)

-> docker_sandbox.go: StopPodSandbox

-> cni.go: TearDownPod

-> CNI deleteFromNetwork (Timeout Default: 3m) <- Nothing gonna happen if CNI doesn't handle this situation.

-> docker_service.go: StopContainer

2. kuberuntime_gc.go: client.RemovePodSandbox

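StopPodSandbox raises a timeout error and returns without going on to remove the pod sandbox.

However, the CNI process is still running after StopPodSandbox has timed out.

It seems the kubelet threads are starved by the leftover CNI processes, and as a result kubelet cannot monitor PLEG properly.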
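The pattern can be reproduced in miniature. The Python sketch below is only an illustration of "the caller times out but the work keeps going", with the 2m and 3m defaults scaled down to 0.2 and 0.6 seconds; the function names are stand-ins and none of this is kubelet or CNI code.

```python
import concurrent.futures
import threading
import time


def teardown_network(done, seconds):
    """Stand-in for a slow CNI teardown; the caller never cancels it."""
    time.sleep(seconds)
    done.set()


def stop_sandbox(timeout, teardown_seconds):
    """Wait at most `timeout` seconds, like a caller with a deadline."""
    done = threading.Event()
    pool = concurrent.futures.ThreadPoolExecutor(max_workers=1)
    future = pool.submit(teardown_network, done, teardown_seconds)
    try:
        future.result(timeout=timeout)
        timed_out = False
    except concurrent.futures.TimeoutError:
        timed_out = True
    still_running = not done.is_set()
    pool.shutdown(wait=True)  # the worker runs to completion regardless
    return timed_out, still_running, done.is_set()


# Caller deadline 0.2s, teardown needs 0.6s.
print(stop_sandbox(timeout=0.2, teardown_seconds=0.6))  # (True, True, True)
```

Every call that times out this way leaves a worker behind until the teardown finishes, so repeated GC attempts can pile up.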

I solved this problem by changing the CNI to return when CNI_NS is empty (because that means the pod is dead).

(BTW, I am using kuryr-kubernetes as the CNI plugin.)
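Roughly, the change has the shape of the Python sketch below. This is not the actual kuryr-kubernetes patch. It assumes the CNI_NS mentioned above corresponds to the `CNI_NETNS` environment variable that the CNI spec passes to plugins alongside `CNI_COMMAND`; the `handle_cni_command` function and its return strings are hypothetical.

```python
def handle_cni_command(env, teardown):
    """Dispatch a CNI call; skip teardown when no network namespace is left."""
    command = env.get("CNI_COMMAND", "")
    netns = env.get("CNI_NETNS", "")
    if command == "DEL" and not netns:
        # Namespace already gone: treat the pod as dead and return at once
        # instead of waiting on a teardown that can never complete.
        return "skipped"
    if command == "DEL":
        teardown(netns)
        return "torn down"
    return "ignored"


calls = []
print(handle_cni_command({"CNI_COMMAND": "DEL", "CNI_NETNS": ""}, calls.append))
# skipped
print(handle_cni_command({"CNI_COMMAND": "DEL", "CNI_NETNS": "/proc/42/ns/net"}, calls.append))
# torn down
print(calls)  # ['/proc/42/ns/net']
```

Returning early for an empty namespace keeps the DEL call from blocking until the timeout in the sequence above.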

Hope this helps you all.